Beyond Image Classification: More Ways to Apply Deep Learning

By Johanna Pingel, MathWorks

Deep learning networks are proving to be versatile tools. Best known for image classification, they are increasingly being applied to a wide variety of other tasks. They can deliver high accuracy and fast processing, and they let you perform complex analyses of large data sets without deep domain expertise. Here are some examples of tasks for which you might want to consider using a deep learning network.

Text Analytics

In this example, we’ll analyze Twitter data to see whether the sentiment surrounding a specific term or phrase is positive or negative. Sentiment analysis has many practical applications, such as branding, political campaigning, and advertising.

Machine learning was (and still is) commonly used for sentiment analysis. Traditional machine learning models typically analyze individual words, whereas a deep learning network can be applied to complete sentences and take word order into account, which can substantially improve accuracy.

The training set consists of thousands of sample tweets, each labeled as either positive or negative. Here are some sample training tweets:

| Tweet | Sentiment |
|---|---|
| “I LOVE @Health4UandPets u guys r the best!!” | Positive |
| “@nicolerichie: your picture is very sweet” | Positive |
| “Back to work!” | Negative |
| “Just had the worst presentation ever!” | Negative |

We clean the data by removing “stop words” such as “the” and “and,” which carry little information about sentiment. We then define a long short-term memory (LSTM) network, a type of recurrent neural network (RNN) that can learn dependencies between time steps in a sequence.
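A minimal sketch of this cleaning step in base MATLAB is shown below. The stop-word list is a small hypothetical sample and the punctuation-stripping rule is an assumption; for real text, Text Analytics Toolbox provides `tokenizedDocument` and `removeStopWords`.

```matlab
% Hypothetical, deliberately tiny stop-word list (real lists are much longer)
stopWords = {'the','and','a','an','to','is','are','of','in','your'};

tweet = 'Just had the worst presentation ever!';

% Lowercase, strip punctuation (keep letters, digits, @, whitespace), split on whitespace
cleaned = lower(tweet);
cleaned = regexprep(cleaned, '[^a-z0-9@\s]', '');
tokens  = strsplit(strtrim(cleaned));

% Keep only the tokens that are not stop words
keep     = ~ismember(tokens, stopWords);
filtered = tokens(keep);
disp(strjoin(filtered, ' '))
```

Here only “the” is removed, leaving the words that carry the sentiment, such as “worst.”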

LSTMs are well suited to classifying sequence and time-series data. When analyzing text, an LSTM takes into account not only individual words but also word order and combinations of words.
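To make “learning dependencies over time” concrete, here is a minimal sketch of one LSTM layer stepping through a single sequence in base MATLAB. The sizes are hypothetical and the weights are random placeholders; a trained `lstmLayer` learns these values from data. The point is that the cell state `c` is carried from one time step to the next, so the final hidden state depends on the whole sequence.

```matlab
rng(0);                                   % reproducible placeholder weights
inputSize = 4;  numHidden = 3;  seqLen = 5;   % hypothetical sizes
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Weights for the four gate blocks, stacked in the order
% input gate, forget gate, cell candidate, output gate
W = 0.1 * randn(4*numHidden, inputSize);  % input weights
R = 0.1 * randn(4*numHidden, numHidden);  % recurrent weights
b = zeros(4*numHidden, 1);                % biases

X = randn(inputSize, seqLen);   % one sequence: one feature column per time step
h = zeros(numHidden, 1);        % hidden state
c = zeros(numHidden, 1);        % cell state (the long-term memory)

for t = 1:seqLen
    z = W*X(:,t) + R*h + b;
    inGate     = sigmoid(z(1:numHidden));
    forgetGate = sigmoid(z(numHidden+1:2*numHidden));
    candidate  = tanh(z(2*numHidden+1:3*numHidden));
    outGate    = sigmoid(z(3*numHidden+1:end));
    c = forgetGate .* c + inGate .* candidate;   % keep some old memory, add some new
    h = outGate .* tanh(c);                      % hidden state after step t
end

% With 'OutputMode','last', only this final hidden state reaches the
% fully connected layer, so the whole sequence yields one prediction.
disp(h.')
```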

The MATLAB® code for the network itself is simple:

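```matlab
% inputSize:  number of features per time step (for example, a word-embedding dimension)
% outputSize: number of LSTM hidden units
% numClasses: number of sentiment classes (2 here: positive and negative)
layers = [
    sequenceInputLayer(inputSize)
    lstmLayer(outputSize,'OutputMode','last')   % output only the last time step
    fullyConnectedLayer(numClasses)
    softmaxLayer
    classificationLayer];
```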

On a GPU, the network trains quickly: 30 epochs (complete passes through the training data) take just 6 minutes.
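As a sketch of what “30 epochs” means in terms of training iterations: the number of observations below is hypothetical (the article says only “thousands” of tweets), 128 is the default `MiniBatchSize` in `trainingOptions`, and the sketch assumes any final partial mini-batch in each epoch is dropped.

```matlab
numObservations = 5000;   % hypothetical training-set size
miniBatchSize   = 128;    % trainingOptions default
maxEpochs       = 30;     % as in the article

itersPerEpoch = floor(numObservations / miniBatchSize);   % mini-batches per pass
totalIters    = itersPerEpoch * maxEpochs;
fprintf('%d iterations per epoch, %d iterations in total\n', itersPerEpoch, totalIters);

% The corresponding options could be set along these lines
% (the 'adam' solver is an assumption; the article does not name one):
% options = trainingOptions('adam', 'MaxEpochs', maxEpochs, ...
%     'MiniBatchSize', miniBatchSize, 'ExecutionEnvironment', 'gpu');
```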

Once we’ve trained the model, it can be used on new data. For example, we could use it to determine whether there is a correlation between sentiment scores and stock prices.
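A minimal sketch of that correlation check is shown below. Both vectors are hypothetical placeholders, not real data; `dailySentiment` could be, for example, the fraction of each day’s tweets that the trained network classifies as positive.

```matlab
% Hypothetical placeholder data, one value per trading day
dailySentiment = [0.55 0.60 0.48 0.70 0.65];
closingPrice   = [101.2 102.0 100.5 104.1 103.3];

R   = corrcoef(dailySentiment, closingPrice);   % 2x2 correlation matrix
rho = R(1,2);                                   % Pearson correlation coefficient
fprintf('Pearson correlation: %.2f\n', rho);
```

A correlation on its own says nothing about causation; it is only a first check of whether the two series move together.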

Speech Recognition

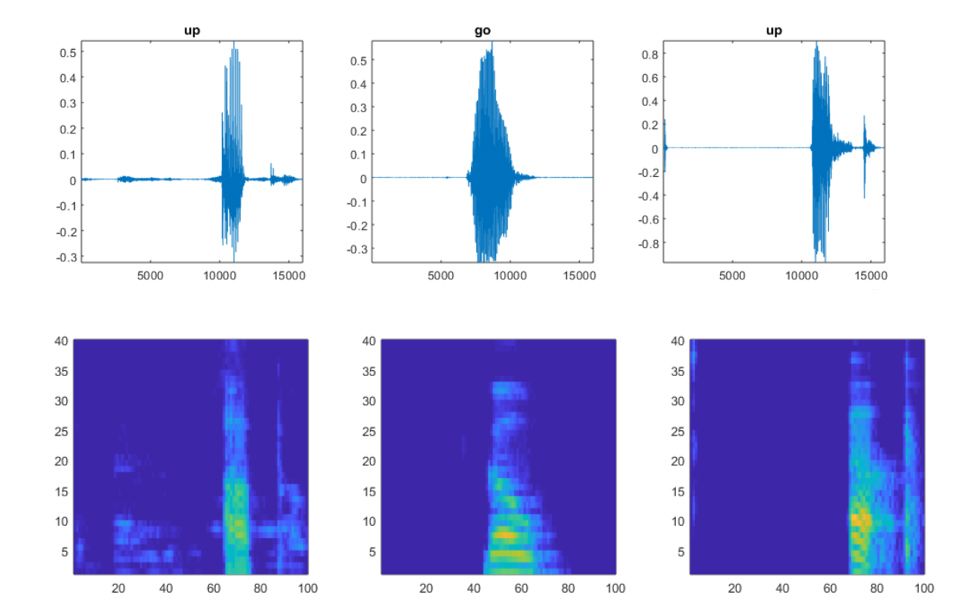

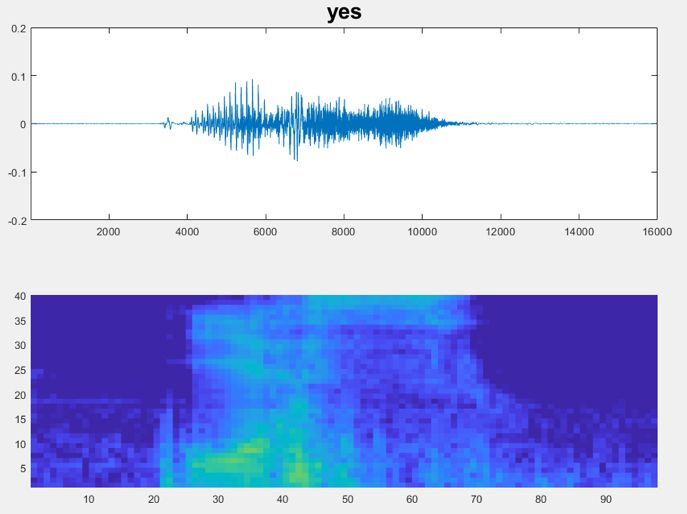

In this example, we want to classify speech audio files into their corresponding classes of words. At first glance, this problem looks completely different from image classification, but it’s actually very similar. A spectrogram is a 2D time-frequency representation of a 1D audio signal (Figure 1). We can use it as input to a convolutional neural network (CNN) just as we would use a “real” image.
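The 1D-to-2D conversion can be sketched in base MATLAB: cut the signal into overlapping frames, window each frame, and take the FFT magnitude. The sample rate, frame length, hop size, and the test tone standing in for speech are all hypothetical choices; the Signal Processing Toolbox `spectrogram` function computes a comparable representation.

```matlab
fs = 16000;                          % sample rate (Hz), hypothetical
t  = (0:fs-1)'/fs;                   % 1 second of samples
x  = sin(2*pi*440*t);                % stand-in for a speech recording

frameLen = 512;  hop = 256;          % hypothetical framing parameters
win = 0.54 - 0.46*cos(2*pi*(0:frameLen-1)'/(frameLen-1));   % Hamming window
numFrames = 1 + floor((numel(x) - frameLen)/hop);

S = zeros(frameLen/2 + 1, numFrames);     % rows: frequency bins, columns: time frames
for k = 1:numFrames
    idx = (k-1)*hop + (1:frameLen);
    spectrum = fft(x(idx) .* win);
    S(:,k) = abs(spectrum(1:frameLen/2 + 1));   % keep non-negative frequencies
end
logS = log10(S + eps);   % log magnitude, a common choice for display and CNN input
```

The resulting matrix `logS` can be treated as a one-channel image: one axis is time, the other is frequency.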

The spectrogram() function is a simple way to convert an audio signal into its time-localized frequency content. However, speech is a specialized form of audio, with important features concentrated in specific frequency ranges. Because we want the CNN to focus on these ranges, we will use Mel-frequency cepstral coefficients (MFCCs), which are designed to emphasize the frequency regions most relevant to speech.
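Mel-frequency cepstral coefficients are built on the mel scale. A sketch using one common conversion, mel = 2595·log10(1 + f/700) (other published variants exist), shows why: filter bands spaced evenly in mel are narrow at low frequencies, where much of the speech information lies, and wide at high frequencies. The number of bands and the upper frequency are hypothetical.

```matlab
hz2mel = @(f) 2595 * log10(1 + f/700);
mel2hz = @(m) 700 * (10.^(m/2595) - 1);

numBands = 10;  fmax = 8000;                        % hypothetical filter-bank settings
melEdges = linspace(0, hz2mel(fmax), numBands + 2); % evenly spaced in mel...
hzEdges  = mel2hz(melEdges);                        % ...but not in Hz
bandwidths = diff(hzEdges);
fprintf('narrowest gap: %.0f Hz, widest gap: %.0f Hz\n', min(bandwidths), max(bandwidths));
```

A full MFCC computation would then sum spectrogram energy within these bands, take the log, and apply a discrete cosine transform.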

We distribute the training data evenly between the classes of words we want to classify.
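One simple way to balance the classes is to randomly downsample every class to the size of the smallest one. The sketch below assumes that approach (the article does not say how the balancing was done), and the labels are hypothetical.

```matlab
labels = {'yes','no','yes','on','yes','no','on','on','yes'};   % hypothetical labels

[classes, ~, classIdx] = unique(labels);
counts    = accumarray(classIdx(:), 1);   % examples per class
nPerClass = min(counts);                  % size of the smallest class

keepIdx = [];
for k = 1:numel(classes)
    members = find(classIdx == k);
    pick = members(randperm(numel(members), nPerClass));   % random subset of this class
    keepIdx = [keepIdx; pick(:)];         %#ok<AGROW>
end
balancedLabels = labels(sort(keepIdx));
```

Downsampling discards data; when examples are scarce, collecting more data for the small classes or weighting the loss are alternatives.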

To reduce false positives, we include an “unknown” category for words likely to be confused with the target words. For example, if a target word is “on,” then words like “mom,” “dawn,” and “won” are placed in the “unknown” category. The network does not need to recognize these words; it only needs to learn that they are not among the words it should recognize.
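The relabeling itself is a simple lookup. In this sketch, the command list and clip labels are hypothetical; any clip whose word is not a target command is assigned to the `unknown` class.

```matlab
commands   = {'yes','no','on','off'};                      % hypothetical target words
clipLabels = {'on','mom','dawn','yes','won','off'};        % hypothetical clip labels

isCommand   = ismember(clipLabels, commands);
trainLabels = clipLabels;
trainLabels(~isCommand) = {'unknown'};    % collapse all other words into one class
disp(trainLabels)
```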

We then define a CNN. Because we are using the spectrogram as an input, the structure of our CNN can be similar to one we would use for images.
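A sketch of such a network, assuming Deep Learning Toolbox, is shown below. The input size, filter counts, and number of classes are hypothetical values; this is not the architecture used in the article, only an illustration that the layers are the same ones used for images.

```matlab
numRows    = 98;    % hypothetical spectrogram height (for example, time frames)
numCols    = 40;    % hypothetical spectrogram width (for example, frequency bands)
numClasses = 12;    % hypothetical number of word classes, including 'unknown'

layers = [
    imageInputLayer([numRows numCols 1])          % one-channel "image"
    convolution2dLayer(3, 16, 'Padding', 'same')
    batchNormalizationLayer
    reluLayer
    maxPooling2dLayer(2, 'Stride', 2)
    convolution2dLayer(3, 32, 'Padding', 'same')
    batchNormalizationLayer
    reluLayer
    fullyConnectedLayer(numClasses)
    softmaxLayer
    classificationLayer];
```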

Once trained, the model classifies each input image (spectrogram) into the appropriate word category (Figure 2). Accuracy on the validation set is about 96%.
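Validation accuracy is simply the fraction of validation clips whose predicted label matches the true label. In this sketch the labels are hypothetical cell arrays; in practice the predictions would come from the trained network (for example, via `classify`).

```matlab
YValidation = {'yes','no','on','unknown','yes'};   % hypothetical true labels
YPred       = {'yes','no','off','unknown','yes'};  % hypothetical predictions

accuracy = mean(strcmp(YPred, YValidation));       % fraction of exact matches
fprintf('Validation accuracy: %.0f%%\n', 100*accuracy);
```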

Image Denoising

Wavelets and filters were (and still are) common methods of denoising. In this example, we’ll see how a pretrained image denoising CNN (DnCNN) can be applied to a set of images containing Gaussian noise (Figure 3).

We start by downloading an image that has Gaussian noise.

```matlab
imshow(noisyRGB);
```
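If you want to reproduce this kind of input yourself, Gaussian noise can be simulated by adding zero-mean random values to a clean image and clipping the result. The synthetic test image and the noise variance below are hypothetical; with Image Processing Toolbox, `imnoise(RGB,'gaussian',0,0.01)` performs a comparable operation on a real photo.

```matlab
rng(1);
[xx, yy] = meshgrid(linspace(0, 1, 128));
RGB = cat(3, xx, yy, 0.5*ones(128));     % hypothetical smooth 128x128 color image in [0,1]

noiseVar = 0.01;                         % variance, so the standard deviation is 0.1
noisyRGB = RGB + sqrt(noiseVar) * randn(size(RGB));
noisyRGB = min(max(noisyRGB, 0), 1);     % clip back to the valid [0,1] range
```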

Because this is a color image and the network was trained on grayscale images, the only slightly tricky part of the process is splitting the image into its three color channels: red (R), green (G), and blue (B).

```matlab
% Extract each color channel as a separate grayscale image
noisyR = noisyRGB(:,:,1);
noisyG = noisyRGB(:,:,2);
noisyB = noisyRGB(:,:,3);
```

We load the pretrained DnCNN network.

```matlab
net = denoisingNetwork('dncnn');
```

We can now use it to remove noise from each color channel.

```matlab
% Denoise each channel independently with the same network
denoisedR = denoiseImage(noisyR,net);
denoisedG = denoiseImage(noisyG,net);
denoisedB = denoiseImage(noisyB,net);
```

We recombine the denoised color channels to form the denoised RGB image.

```matlab
% Stack the channels back together along the third dimension
denoisedRGB = cat(3,denoisedR,denoisedG,denoisedB);
imshow(denoisedRGB)
title('Denoised Image')
```
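The split, denoise, and recombine steps can also be written as one loop over the color channels. In this sketch, `denoiseFcn` and the random input image are hypothetical stand-ins; with Image Processing Toolbox, `denoiseFcn` could be `@(ch) denoiseImage(ch, net)`.

```matlab
denoiseFcn = @(ch) ch;                 % hypothetical placeholder: identity "denoiser"
noisyRGB   = rand(64, 64, 3);          % hypothetical input image

% Preallocate with the same class as the input (for example, uint8 stays uint8)
denoisedRGB = zeros(size(noisyRGB), 'like', noisyRGB);
for k = 1:size(noisyRGB, 3)            % k = 1 (R), 2 (G), 3 (B)
    denoisedRGB(:,:,k) = denoiseFcn(noisyRGB(:,:,k));
end
```

The loop form avoids repeating the same call three times and works unchanged for any number of channels.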

A quick visual comparison of the original (non-noisy) image and the denoised image suggests that the result is reasonable (Figure 4).
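A visual check can be complemented by a numeric one. A common measure is peak signal-to-noise ratio (PSNR), where higher values mean the image is closer to the reference. The sketch below assumes images scaled to [0,1] and uses hypothetical placeholder images; Image Processing Toolbox also provides a `psnr` function.

```matlab
% PSNR in dB for images with peak value 1
psnrFcn = @(img, ref) 10 * log10(1 / mean((img(:) - ref(:)).^2));

ref = 0.5 * ones(32, 32, 3);     % hypothetical noise-free reference
img = ref + 0.1;                 % uniform error of 0.1 at every pixel
fprintf('PSNR: %.1f dB\n', psnrFcn(img, ref));
```

With a uniform error of 0.1, the mean squared error is 0.01, so the PSNR is 10·log10(1/0.01) = 20 dB.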

Let’s zoom in on a few details:

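```matlab
% rect is [xmin ymin width height]; RGB holds the original (noise-free) image
rect = [120 440 130 130];
cropped_orig = imcrop(RGB,rect);
cropped_denoise = imcrop(denoisedRGB,rect);
% Show the two crops side by side
imshowpair(cropped_orig,cropped_denoise,'montage');
```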

The zoomed-in view in Figure 5 shows that denoising has introduced some side effects: the original (non-noisy) image clearly has more definition, especially in the roof and the grass. This result might be acceptable, or the image might need further processing, depending on the application it will be used for.

If you’re considering using a DnCNN for image denoising, bear in mind that it can only handle the type of noise on which it has been trained, in this case Gaussian noise. For more flexibility, you can use MATLAB and Deep Learning Toolbox™ to train your own network using predefined layers or to train a fully custom denoising neural network.

Published 2018