Generate Reward Function from a Model Verification Block for a Water Tank System

This example shows how to automatically generate a reward function from performance requirements defined in a Simulink® Design Optimization™ model verification block. You then use the generated reward function to train a reinforcement learning agent.

Fix Random Number Stream for Reproducibility

The example code might involve computation of random numbers at several stages. Fixing the random number stream at the beginning of some sections in the example code preserves the random number sequence in the section every time you run it, which increases the likelihood of reproducing the results. For more information, see Results Reproducibility.

Fix the random number stream with seed 0 and random number algorithm Mersenne Twister. For more information on controlling the seed used for random number generation, see rng.

previousRngState = rng(0,"twister");

The output previousRngState is a structure that contains information about the previous state of the stream. You will restore the state at the end of the example.
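The following minimal sketch, in base MATLAB, shows what fixing the stream buys you: seeding with the same seed and algorithm before two draws produces identical sequences. The variable names saved, a, and b are illustrative only.

```matlab
% Minimal sketch: reseeding reproduces the same random sequence.
saved = rng(0,"twister");   % save the current state, then seed the generator
a = rand(1,3);              % first draw
rng(0,"twister");           % reseed with the same seed and algorithm
b = rand(1,3);              % second draw repeats the first
disp(isequal(a,b))          % displays 1 (true)
rng(saved);                 % restore the previously saved state
```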

Introduction

You can use the generateRewardFunction function to generate a reward function for reinforcement learning, starting from performance constraints specified in a Simulink Design Optimization model verification block. The resulting reward signal is a sum of weighted penalties on constraint violations by the current state of the environment.
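A minimal sketch of this structure in base MATLAB: each constraint contributes a nonnegative penalty that is zero when the constraint holds, the penalties are weighted and summed, and the reward is the negative of that sum. The quadPenalty function, the two-constraint setup, and the numbers are hypothetical and only illustrate the arithmetic; they are not the generated code.

```matlab
% Hypothetical quadratic exterior penalty: zero inside [xmin, xmax],
% squared distance to the violated bound outside it.
quadPenalty = @(x,xmin,xmax) max(0, xmin - x).^2 + max(0, x - xmax).^2;

% Two illustrative constraints on the current state
x    = [2.15; 0.50];   % current values of two signals
xmin = [1.98; 0.00];   % lower bounds
xmax = [2.02; 1.00];   % upper bounds
w    = [10; 1];        % nonnegative weight for each constraint

p = quadPenalty(x, xmin, xmax);   % per-constraint penalties
reward = -sum(w .* p);            % weighted penalties enter with a negative sign
fprintf("penalties = [%g %g], reward = %g\n", p, reward);
```

Here only the first signal violates its upper bound, by 0.13, so the reward is -10*0.13^2, about -0.169. Because every term is nonnegative, the reward can reach 0 only when all constraints are satisfied.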

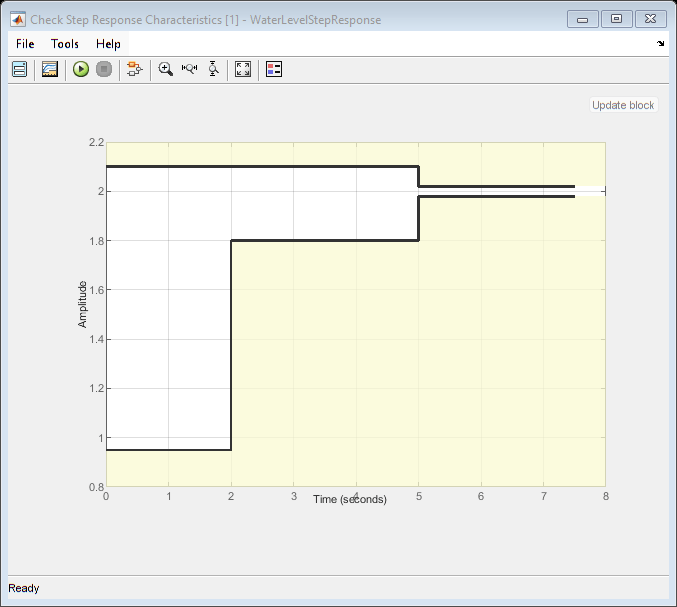

In this example, you convert the cost and constraint specifications defined in a Check Step Response Characteristics block for a water tank system into a reward function. You then use the reward function to train an agent to control the water tank.

Specify parameters for this example.

% Water tank parameters
a = 2; b = 5; A = 20;
% Initial and final height
h0 = 1; hf = 2;
% Simulation and sample times
Tf = 10; Ts = 0.1;

For more information on the original model for this example, see watertank Simulink Model (Simulink Control Design).

Open the model.

mdl = "rlWatertankStepInput";

open_system(mdl)

The model in this example has been modified for reinforcement learning. The goal is to control the level of the water in the tank using a reinforcement learning agent, while satisfying the response characteristics defined in the Check Step Response Characteristics block. For more information, see Check Step Response Characteristics (Simulink Design Optimization).

Open the block to view the desired step response specifications.

verifBlk = mdl + "/WaterLevelStepResponse";

open_system(verifBlk)

Generate a Reward Function

Generate the reward function code from specifications in the WaterLevelStepResponse block using generateRewardFunction. The code is displayed in the MATLAB Editor.

generateRewardFunction(verifBlk)

The generated reward function is a starting point for reward design. You might modify the function by choosing different penalty functions and tuning the penalty weights. For this example, make the following changes to the generated code:

- The default penalty weight is 1. Set the weight to 10.
- The default exterior penalty function method is step. Change the method to quadratic.

After you make changes, the weight and penalty specifications should be as follows:

Weight = 10;

Penalty = sum(exteriorPenalty(x,Block1_xmin,Block1_xmax,'quadratic'));
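To see why the quadratic method can give a more informative learning signal than the step method, compare the two penalty shapes on the settling band of this example. The sketch defines its own hypothetical stepPenalty and quadPenalty functions, assuming a step penalty of 1 outside the bounds and a squared-distance quadratic penalty; these are illustrations, not the toolbox exteriorPenalty implementation.

```matlab
% Hypothetical penalty shapes, for illustration only.
xmin = 1.98; xmax = 2.02;                        % settling band around hf = 2
stepPenalty = @(x) double(x < xmin | x > xmax);  % 1 outside the band, 0 inside
quadPenalty = @(x) max(0, xmin - x).^2 + max(0, x - xmax).^2;

x = [2.00 2.03 2.10 2.50];                       % candidate water levels
disp([x; stepPenalty(x); quadPenalty(x)])        % rows: level, step, quadratic
```

With the step penalty, levels of 2.03 and 2.50 are penalized equally; the quadratic penalty grows with the size of the violation, which tells the agent how far it is from satisfying the constraint.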

For this example, the modified code has been saved in the MATLAB® function file rewardFunctionVfb.m. Display the generated reward function.

type rewardFunctionVfb.m

function reward = rewardFunctionVfb(x,t)
% REWARDFUNCTION generates rewards
% from Simulink block specifications.
%
% x : Input of
% watertank_stepinput_rl/WaterLevelStepResponse
% t : Simulation time (s)
% Reinforcement Learning Toolbox
% 26-Apr-2021 13:05:16
%#codegen

%% Specifications from
% watertank_stepinput_rl/WaterLevelStepResponse
Block1_InitialValue = 1;
Block1_FinalValue = 2;
Block1_StepTime = 0;
Block1_StepRange = Block1_FinalValue - ...
    Block1_InitialValue;
Block1_MinRise = Block1_InitialValue + ...
    Block1_StepRange * 80/100;
Block1_MaxSettling = Block1_InitialValue + ...
    Block1_StepRange * (1+2/100);
Block1_MinSettling = Block1_InitialValue + ...
    Block1_StepRange * (1-2/100);
Block1_MaxOvershoot = Block1_InitialValue + ...
    Block1_StepRange * (1+10/100);
Block1_MinUndershoot = Block1_InitialValue - ...
    Block1_StepRange * 5/100;

if t >= Block1_StepTime
    if Block1_InitialValue <= Block1_FinalValue
        Block1_UpperBoundTimes = [0,5; 5,max(5+1,t+1)];
        Block1_UpperBoundAmplitudes = [Block1_MaxOvershoot, ...
            Block1_MaxOvershoot; ...
            Block1_MaxSettling, ...
            Block1_MaxSettling];
        Block1_LowerBoundTimes = [0,2; 2,5; 5,max(5+1,t+1)];
        Block1_LowerBoundAmplitudes = [Block1_MinUndershoot, ...
            Block1_MinUndershoot; ...
            Block1_MinRise, ...
            Block1_MinRise; ...
            Block1_MinSettling, ...
            Block1_MinSettling];
    else
        Block1_UpperBoundTimes = [0,2; 2,5; 5,max(5+1,t+1)];
        Block1_UpperBoundAmplitudes = [Block1_MinUndershoot, ...
            Block1_MinUndershoot; ...
            Block1_MinRise, ...
            Block1_MinRise; ...
            Block1_MinSettling, ...
            Block1_MinSettling];
        Block1_LowerBoundTimes = [0,5; 5,max(5+1,t+1)];
        Block1_LowerBoundAmplitudes = [Block1_MaxOvershoot, ...
            Block1_MaxOvershoot; ...
            Block1_MaxSettling, ...
            Block1_MaxSettling];
    end

    Block1_xmax = zeros(1,size(Block1_UpperBoundTimes,1));
    for idx = 1:numel(Block1_xmax)
        tseg = Block1_UpperBoundTimes(idx,:);
        xseg = Block1_UpperBoundAmplitudes(idx,:);
        Block1_xmax(idx) = interp1(tseg,xseg,t,'linear',NaN);
    end
    if all(isnan(Block1_xmax))
        Block1_xmax = Inf;
    else
        Block1_xmax = max(Block1_xmax,[],'omitnan');
    end

    Block1_xmin = zeros(1,size(Block1_LowerBoundTimes,1));
    for idx = 1:numel(Block1_xmin)
        tseg = Block1_LowerBoundTimes(idx,:);
        xseg = Block1_LowerBoundAmplitudes(idx,:);
        Block1_xmin(idx) = interp1(tseg,xseg,t,'linear',NaN);
    end
    if all(isnan(Block1_xmin))
        Block1_xmin = -Inf;
    else
        Block1_xmin = max(Block1_xmin,[],'omitnan');
    end
else
    Block1_xmin = -Inf;
    Block1_xmax = Inf;
end

%% Penalty function weight (specify nonnegative)
Weight = 10;

%% Compute penalty
% Penalty is computed for violation
% of linear bound constraints.
%
% To compute exterior bound penalty,
% use the exteriorPenalty function and
% specify the penalty method
% as 'step' or 'quadratic'.
%
% Alternatively, use the hyperbolicPenalty
% or barrierPenalty function for
% computing hyperbolic and barrier penalties.
%
% For more information, see help for these functions.
Penalty = sum(exteriorPenalty( ...
    x,Block1_xmin,Block1_xmax, ...
    'quadratic'));

%% Compute reward
reward = -Weight * Penalty;
end
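To make the numeric constraints in this listing concrete, the following base-MATLAB sketch recomputes the bound levels for h0 = 1 and hf = 2 and evaluates the piecewise-constant envelope for a rising step at a few times. The anonymous functions upperAt and lowerAt are hypothetical helpers; they deliberately avoid the exact segment boundaries at 2 s and 5 s, where the generated code takes the maximum over overlapping segments.

```matlab
% Recompute the step-response bounds from the listing for h0 = 1, hf = 2
h0 = 1; hf = 2; stepRange = hf - h0;
minRise       = h0 + stepRange*80/100;      % 1.80
maxSettling   = h0 + stepRange*(1+2/100);   % 2.02
minSettling   = h0 + stepRange*(1-2/100);   % 1.98
maxOvershoot  = h0 + stepRange*(1+10/100);  % 2.10
minUndershoot = h0 - stepRange*5/100;       % 0.95

% Piecewise-constant envelope for a rising step (t in seconds),
% valid away from the segment boundaries at 2 s and 5 s
upperAt = @(t) (t < 5)*maxOvershoot + (t >= 5)*maxSettling;
lowerAt = @(t) (t < 2)*minUndershoot + (t >= 2 & t < 5)*minRise + (t >= 5)*minSettling;

for t = [1 3 7]
    fprintf("t = %g s: %.2f <= h <= %.2f\n", t, lowerAt(t), upperAt(t));
end
```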

To integrate this reward function into the water tank model, open the MATLAB Function block under the Reward Subsystem.

open_system(mdl + "/Reward/Reward Function")

Append the following line of code to the function and save the model.

r = rewardFunctionVfb(x,t);

The MATLAB Function block now executes rewardFunctionVfb.m to compute rewards.

For this example, the MATLAB Function block has already been modified and saved.

Create a Reinforcement Learning Environment

The environment dynamics are modeled in the Water-Tank Subsystem. For this environment:

- The observations are the reference height ref from the last 5 time steps, and the height error err = ref - H.
- The action is the voltage V applied to the pump.
- The sample time Ts is 0.1 s.
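The observation count used below follows from this list: 5 past reference values plus 1 error signal give 6 observations. The sketch assembles such a vector from hypothetical signal values; the ordering (reference history first, error last) and the use of the most recent reference in the error are assumptions, not taken from the model.

```matlab
% Hypothetical signals, for illustration only
refHistory = [2; 2; 2; 2; 2];   % reference height at the last 5 time steps
H = 1.4;                        % current water height
err = refHistory(end) - H;      % height error, err = ref - H
obs = [refHistory; err];        % 6-by-1 observation vector
disp(size(obs))
```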

Create observation and action specifications for the environment.

numObs = 6;
numAct = 1;
oinfo = rlNumericSpec([numObs 1]);
ainfo = rlNumericSpec([numAct 1]);

Create the reinforcement learning environment using the rlSimulinkEnv function.

agentBlk = mdl + "/RL Agent";

env = rlSimulinkEnv(mdl, agentBlk, oinfo, ainfo);

Create a Reinforcement Learning Agent

The agent used in this example is a twin-delayed deep deterministic policy gradient (TD3) agent. TD3 agents use two parameterized Q-value function approximators to estimate the value (that is, the expected cumulative long-term reward) of the policy. To model the parameterized Q-value function within both critics, use a neural network with two inputs (the observation and action) and one output (the value of the policy when taking a given action from the state corresponding to a given observation). For more information on TD3 agents, see Twin-Delayed Deep Deterministic (TD3) Policy Gradient Agent.
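In the standard TD3 formulation, the two critics exist to curb overestimation: the learning target uses the smaller of the two target-critic estimates. The sketch computes this clipped double-Q target for one transition with made-up numbers. It does not reproduce the toolbox internals; for example, the target policy smoothing noise that TD3 adds to the target action is omitted.

```matlab
% Illustrative values for one transition
r      = -0.5;   % reward received
gamma  = 0.99;   % discount factor
isDone = false;  % the next state is not terminal
q1Next = -2.0;   % target critic 1 estimate at (next observation, target action)
q2Next = -3.0;   % target critic 2 estimate at the same input

% Clipped double-Q target: use the smaller (more pessimistic) estimate
y = r + gamma * (1 - isDone) * min(q1Next, q2Next);
fprintf("target y = %.4f\n", y);
```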

When you create the agent, the initial parameters of the actor and critic networks are initialized with random values. Fix the random number stream so that the agent is always initialized with the same parameter values.

rng(0,"twister");

Define each network path as an array of layer objects. Assign names to the input and output layers of each path. These names allow you to connect the paths and then later explicitly associate the network input and output layers with the appropriate environment channel.

mainPath = [
    featureInputLayer(numObs)
    fullyConnectedLayer(128)
    concatenationLayer(1, 2, Name="concat")
    reluLayer()
    fullyConnectedLayer(128)
    reluLayer()
    fullyConnectedLayer(1)];

actionPath = [
    featureInputLayer(numAct)
    fullyConnectedLayer(8, Name="fc_act")];

Create a dlnetwork object and add layers.

criticNet = dlnetwork();
criticNet = addLayers(criticNet, mainPath);
criticNet = addLayers(criticNet, actionPath);

Connect the layers.

criticNet = connectLayers(criticNet, "fc_act", "concat/in2");

Plot the critic network structure.

plot(criticNet);

Display the number of weights.

summary(initialize(criticNet));

Initialized: true

Number of learnables: 18.6k

Inputs:

1 'input' 6 features

2 'input_1' 1 features
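The reported count of about 18.6k learnables can be checked by hand: each fully connected layer has in*out weights plus out biases, while the concatenation and ReLU layers have none. The helper fcParams is a hypothetical name introduced for this check.

```matlab
% Learnables of a fully connected layer: weights (in*out) plus biases (out)
fcParams = @(in,out) in*out + out;

mainPath   = fcParams(6,128) ...       % observation -> 128
           + fcParams(128+8,128) ...   % concatenated features -> 128
           + fcParams(128,1);          % -> scalar Q-value
actionPath = fcParams(1,8);            % action -> 8
total = mainPath + actionPath;
fprintf("critic learnables: %d\n", total);
```

The total is 18577, which summary rounds to 18.6k.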

Create the critic function objects using rlQValueFunction. The critic function object encapsulates the critic by wrapping around the critic deep neural network. To make sure the critics have different initial weights, explicitly initialize the network each time you use it to create a critic.

critic1 = rlQValueFunction(initialize(criticNet), oinfo, ainfo);
critic2 = rlQValueFunction(initialize(criticNet), oinfo, ainfo);

TD3 agents use a continuous deterministic actor to learn a parameterized deterministic policy over continuous action spaces. This actor takes the current observation as input and returns as output an action that is a deterministic function of the observation.

To model the parameterized policy within the actor, use a neural network with one input layer (which receives the content of the environment observation channel, as specified by oinfo) and one output layer (which returns the action to the environment action channel, as specified by ainfo).

Define the network as an array of layer objects.

actorNet = [
    featureInputLayer(numObs)
    fullyConnectedLayer(128)
    reluLayer()
    fullyConnectedLayer(128)
    reluLayer()
    fullyConnectedLayer(numAct)
    ];

Convert the layer array to a dlnetwork object, initialize the network, and display the number of weights.

actorNet = dlnetwork(actorNet);
actorNet = initialize(actorNet);
summary(actorNet)

Initialized: true

Number of learnables: 17.5k

Inputs:

1 'input' 6 features
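The same weights-plus-biases count explains the actor's 17.5k learnables: three fully connected layers of sizes 6-to-128, 128-to-128, and 128-to-1 (numAct = 1). As before, fcParams is a hypothetical helper.

```matlab
fcParams = @(in,out) in*out + out;   % weights plus biases of one layer
total = fcParams(6,128) + fcParams(128,128) + fcParams(128,1);
fprintf("actor learnables: %d\n", total);
```

The total is 17537, which summary rounds to 17.5k.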

Plot the actor network.

plot(actorNet);

Create a deterministic actor function that is responsible for modeling the policy of the agent. For more information, see rlContinuousDeterministicActor.

actordlNet = initialize(actorNet);
actor = rlContinuousDeterministicActor(actordlNet, oinfo, ainfo);

Specify the agent options using rlTD3AgentOptions.

Use an experience buffer length of 1e6 to store experiences. A larger experience buffer can store a more diverse set of experiences.

Use mini-batches of 512 experiences for learning. Smaller mini-batches are computationally efficient but might introduce variance in training. By contrast, larger mini-batches can make training more stable but require more memory.

Use a discount factor of 0.99, which favors long-term rewards.

Use 1e3 warm start steps to delay the start of learning.

agentOpts = rlTD3AgentOptions( ...
    SampleTime=Ts, ...
    DiscountFactor=0.99, ...
    ExperienceBufferLength=1e6, ...
    NumWarmStartSteps=1e3, ...
    MiniBatchSize=512);
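A common rule of thumb, which is an approximation and not a toolbox setting, reads a discount factor gamma as an effective horizon of about 1/(1 - gamma) steps. For gamma = 0.99 that is roughly 100 steps, about one 10 s episode at Ts = 0.1 s. The sketch also checks the closed form of the discounted return of a constant, made-up reward r over N steps.

```matlab
gamma = 0.99;
horizon = 1/(1 - gamma);        % rough effective horizon, in steps
fprintf("effective horizon ~ %.0f steps\n", horizon);

% Discounted return of a constant reward r over N steps
r = -1; N = 100;
G = sum(r * gamma.^(0:N-1));                 % explicit sum
Gclosed = r * (1 - gamma^N)/(1 - gamma);     % geometric-series closed form
fprintf("G = %.4f, closed form = %.4f\n", G, Gclosed);
```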

The exploration model in this TD3 agent is a Gaussian noise model. During training, the agent explores by sampling from this noise distribution.

- Set the initial standard deviation of the noise to 0.3.
- Set a decay rate of 1e-5 for the standard deviation. Decaying the noise promotes exploration early in training, when the agent does not yet have a good policy, and exploitation later, once the agent has learned a better policy.

agentOpts.ExplorationModel.StandardDeviation = 0.3;
agentOpts.ExplorationModel.StandardDeviationDecayRate = 1e-5;
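To get a feel for how slowly a decay rate of 1e-5 acts, the following sketch assumes a multiplicative per-step decay, sigma_k = sigma0*(1 - decayRate)^k, ignores any lower limit on the standard deviation, and assumes an upper bound of 500 episodes of 100 steps each. Under these assumptions the noise is still above half its initial value at the end of training.

```matlab
sigma0    = 0.3;       % initial standard deviation
decayRate = 1e-5;      % StandardDeviationDecayRate
numSteps  = 500*100;   % assumed: at most 500 episodes of 100 steps each

% Assumed multiplicative per-step decay, no minimum applied
sigma = sigma0 * (1 - decayRate)^numSteps;
fprintf("sigma after %d steps ~ %.3f\n", numSteps, sigma);
```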

Specify a learning rate of 1e-3 for the actor and 2e-3 for the critics. Use a gradient threshold value of 10 for both the actor and the critics to stabilize gradients during learning.

agentOpts.ActorOptimizerOptions.LearnRate = 1e-3;
agentOpts.ActorOptimizerOptions.GradientThreshold = 10;
for i = 1:2
    agentOpts.CriticOptimizerOptions(i).LearnRate = 2e-3;
    agentOpts.CriticOptimizerOptions(i).GradientThreshold = 10;
end
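A gradient threshold bounds the size of each update. The sketch assumes norm-based clipping of the whole gradient vector, in which a gradient whose L2 norm exceeds the threshold is rescaled to that norm while keeping its direction; the toolbox may apply thresholding differently depending on its optimizer settings, and the gradient values here are made up.

```matlab
threshold = 10;
g = [6; 8; 0; 24];                % illustrative gradient, norm(g) = 26
gNorm = norm(g);
if gNorm > threshold
    g = g * (threshold / gNorm);  % rescale so that norm(g) equals the threshold
end
fprintf("norm after clipping = %g\n", norm(g));
```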

Create the TD3 agent using the actor and critics, and replace the agent's default experience buffer with a prioritized replay memory. For more information on TD3 agents, see rlTD3Agent.

agent = rlTD3Agent(actor, [critic1, critic2], agentOpts);
agent.ExperienceBuffer = rlPrioritizedReplayMemory(oinfo,ainfo);
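In the standard proportional scheme for prioritized experience replay, experiences with larger priorities (typically larger temporal-difference errors) are sampled more often, with probability p_i^alpha / sum_k p_k^alpha. The sketch computes these probabilities for made-up priorities; alpha = 0.6 is an assumed value, and the sketch does not reproduce how rlPrioritizedReplayMemory stores or updates priorities or applies importance-sampling corrections.

```matlab
priorities = [0.1 0.5 2.0 1.0];   % illustrative per-experience priorities
alpha = 0.6;                      % assumed priority exponent
prob = priorities.^alpha / sum(priorities.^alpha);   % sampling probabilities
disp(prob)
```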

Closed Loop Response

Simulate the model to view the closed-loop step response of the untrained agent. The response does not satisfy the performance bounds.

sim(mdl);

Train the Agent

Train the agent to satisfy the performance constraints. First, specify the training options using rlTrainingOptions. For this example, use the following options:

- Run training for a maximum of 500 episodes, with each episode lasting at most ceil(Tf/Ts) time steps, where the total simulation time Tf is 10 s.
- Evaluate the performance of the greedy policy by running 1 evaluation episode every 10 training episodes.
- Stop the training when the evaluation score reaches 0. Because each step reward is the negative of a weighted nonnegative penalty, the score cannot exceed 0, and a score of 0 means that the agent satisfies the performance constraints.

trainOpts = rlTrainingOptions( ...
    MaxEpisodes=500, ...
    MaxStepsPerEpisode=ceil(Tf/Ts), ...
    StopTrainingCriteria="EvaluationStatistic", ...
    StopTrainingValue=0, ...
    ScoreAveragingWindowLength=20);
evl = rlEvaluator( ...
    NumEpisodes=1, ...
    EvaluationFrequency=10);
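The training budget and stopping value follow from simple arithmetic. This sketch assumes that an evaluation runs after every 10th training episode and that the evaluation score is the cumulative reward of the single evaluation episode; the per-step rewards are hypothetical.

```matlab
Tf = 10; Ts = 0.1;
maxSteps = ceil(Tf/Ts);            % MaxStepsPerEpisode
numEvaluations = floor(500/10);    % assumed: one evaluation per 10 of 500 episodes

% Each step reward is -Weight*Penalty <= 0, so an episode score is 0
% only if every step has zero penalty.
stepRewards = -10 * [0 0 0.0004 0];   % hypothetical per-step rewards
score = sum(stepRewards);
fprintf("%d steps per episode, up to %d evaluations, score = %g\n", ...
    maxSteps, numEvaluations, score);
```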

Fix the random stream for reproducibility.

rng(0,"twister");

Train the agent using the train function. Training this agent is a computationally intensive process that might take several minutes to complete. To save time while running this example, load a pretrained agent by setting doTraining to false. To train the agent yourself, set doTraining to true.

doTraining = false;
if doTraining
    trainingStats = train(agent, env, trainOpts, Evaluator=evl);
else
    load("rlWatertankTD3Agent.mat")
end

A snapshot of the training progress is shown in the following figure. You can expect different results due to inherent randomness in the training process.

Closed Loop Response of Trained Agent

Simulate the model to view the closed loop step response. The reinforcement learning agent is able to track the reference height while satisfying the step response constraints.

sim(mdl);

Restore the random number stream using the information stored in previousRngState.

rng(previousRngState);

See Also

Topics

- Tune Single PI Controller Gains For Multiple Operating Points Using Reinforcement Learning

- Train Biped Robot to Walk Using Reinforcement Learning Agents

- Generate Reward Function from a Model Predictive Controller for a Servomotor

- Define Observation and Reward Signals in Custom Environments

- Water Tank Custom Simulink Environment for Reinforcement Learning

- Twin-Delayed Deep Deterministic (TD3) Policy Gradient Agent

- Train Reinforcement Learning Agents