Streaming Pixel Interface

What Is a Streaming Pixel Interface?

In hardware, processing an entire frame of video at one time has a high cost in memory and area. To save resources and reduce the latency of the design, serial processing is preferable in HDL designs. Vision HDL Toolbox™ blocks and System objects operate on a pixel, line, or neighborhood rather than a frame. The blocks and objects accept and generate video data as a serial stream of pixel data and control signals. The control signals indicate the relative location of each pixel within the image or video frame. The protocol mimics the timing of a video system, including inactive intervals between frames. Each block or object operates without full knowledge of the image format, and can tolerate imperfect timing of lines and frames.

All Vision HDL Toolbox blocks and System objects support single pixel streaming (with 1 pixel per cycle). Some blocks and System objects also support multipixel streaming (with 2, 4, or 8 pixels per cycle) for high-rate or high-resolution video. Multipixel streaming uses more hardware resources, but it lets a design support higher video resolutions at the same hardware clock rate as a lower-resolution video. HDL code generation for multipixel streaming is not supported with System objects. Use the equivalent blocks to generate HDL code for multipixel algorithms.

How Does a Streaming Pixel Interface Work?

Video capture systems scan video signals from left to right and from top to bottom. As these systems scan, they generate inactive intervals between lines and frames of active video.

The horizontal blanking interval is made up of the inactive cycles between the end of one line and the beginning of the next line. This interval is often split into two parts: the front porch and the back porch. These terms come from the synchronization pulse between lines in analog video waveforms. The front porch is the number of samples between the end of the active line and the synchronization pulse. The back porch is the number of samples between the synchronization pulse and the start of the active line.

The vertical blanking interval is made up of the inactive cycles between the ending active line of one frame and the starting active line of the next frame.

The scanning pattern requires start and end signals for both horizontal and vertical directions. The Vision HDL Toolbox streaming pixel protocol includes the blanking intervals, and allows you to configure the size of the active and inactive frame.

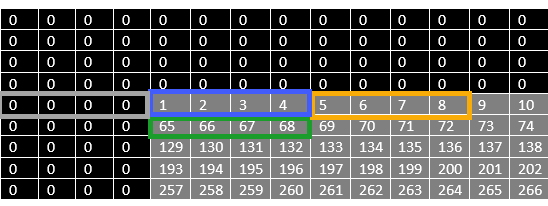

In the frame diagram, the blue shaded area to the left and right of the active frame indicates the horizontal blanking interval. The orange shaded area above and below the active frame indicates the vertical blanking interval. For more information on blanking intervals, see Configure Blanking Intervals.

Why Use a Streaming Pixel Interface?

Format Independence

The blocks and objects using this interface do not need a configuration option for the exact image size or the size of the inactive regions. In addition, if you change the image format for your design, you do not need to update each block or object. Instead, update the image parameters once at the serialization step. Some blocks and objects still require a line buffer size parameter to allocate memory resources.

By isolating the image format details, you can develop a design using a small image for faster simulation. Then once the design is correct, update to the actual image size.

Error Tolerance

Video can come from various sources such as cameras, tape storage, digital storage, or switching and insertion gear. These sources can introduce timing problems. Human vision cannot detect small variance in video signals, so the timing for a video system does not need to be perfect. Therefore, video processing blocks must tolerate variable timing of lines and frames.

By using a streaming pixel interface with control signals, each Vision HDL Toolbox block or object starts computation on a fresh segment of pixels at the start-of-line or start-of-frame signal. The computation occurs whether or not the block or object receives the end signal for the previous segment.

The protocol tolerates minor timing errors. If the number of valid and invalid cycles between start signals varies, the blocks or objects continue to operate correctly. Some Vision HDL Toolbox blocks and objects require minimum horizontal blanking regions to accommodate memory buffer operations. For more information, see Configure Blanking Intervals.
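The restart-on-start-signal behavior described above can be sketched in a few lines of Python. This is an illustrative model only, not toolbox code: the function name `split_lines` and the `(pixel, hStart, hEnd, valid)` tuple layout are assumptions made for this example. The sketch groups valid pixels into lines and begins a fresh line at every hStart, even when the hEnd of the previous line never arrived and the blanking length varies.

```python
def split_lines(stream):
    """Group valid pixels into lines, restarting on every hStart.

    stream: iterable of (pixel, h_start, h_end, valid) tuples (hypothetical layout).
    """
    lines, current = [], None
    for pixel, h_start, h_end, valid in stream:
        if not valid:
            continue                      # blanking cycle: any length is tolerated
        if h_start:
            if current is not None:       # previous line never received its hEnd
                lines.append(current)
            current = []                  # start a fresh segment
        if current is None:
            continue                      # valid pixel outside any line: ignore
        current.append(pixel)
        if h_end:
            lines.append(current)
            current = None
    if current is not None:
        lines.append(current)
    return lines


T, F = True, False
stream = [
    (1, T, F, T), (2, F, F, T), (3, F, F, T),   # hEnd missing on this line
    (0, F, F, F),                               # short blanking interval
    (4, T, F, T), (5, F, F, T), (6, F, T, T),
    (0, F, F, F), (0, F, F, F), (0, F, F, F),   # longer blanking interval
    (7, T, F, T), (8, F, F, T), (9, F, T, T),
]
print(split_lines(stream))
```

In this model, the missing end signal and the uneven blanking intervals do not disturb the grouping of the later lines, which is the property the protocol relies on.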

Pixel Stream Conversion Using Blocks and System Objects

In Simulink®, use the Frame To Pixels block to convert framed video data to a stream of pixels and control signals that conform to this protocol. The control signals are grouped in a nonvirtual bus data type called pixelcontrol. You can configure the block to return a pixel stream with 1, 2, 4, or 8 pixels per cycle.

In MATLAB®, use the visionhdl.FrameToPixels object to convert framed video data to a stream of pixels and control signals that conform to this protocol. The control signals are grouped in a structure data type. You can configure the object to create a pixel stream with 1, 2, 4, or 8 pixels per cycle.

If your input video is already in a serial format, you can design your own logic to generate pixelcontrol control signals from your existing serial control scheme. For example, see Convert Camera Control Signals to pixelcontrol Format and Integrate Vision HDL Blocks into Camera Link System.
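As a rough illustration of such conversion logic, the following Python sketch derives the five control signals from two level-type signals. The input names `line_valid` and `frame_valid` and the function `to_pixelcontrol` are hypothetical and stand in for whatever your camera interface provides. The sketch works on whole recorded sequences, so it can look one cycle ahead to find the end of a line. Equivalent hardware logic would typically delay the stream by a cycle instead.

```python
def to_pixelcontrol(line_valid, frame_valid):
    """Return one (hStart, hEnd, vStart, vEnd, valid) tuple per cycle.

    line_valid, frame_valid: equal-length sequences of 0/1 level signals
    (hypothetical camera-side controls, assumed for this sketch).
    """
    n = len(line_valid)
    valid = [bool(l and f) for l, f in zip(line_valid, frame_valid)]
    ctrl = []
    for i in range(n):
        prev_valid = valid[i - 1] if i > 0 else False
        next_valid = valid[i + 1] if i + 1 < n else False
        h_start = valid[i] and not prev_valid   # first valid cycle of a line
        h_end = valid[i] and not next_valid     # last valid cycle of a line
        ctrl.append([h_start, h_end, False, False, valid[i]])

    # Within each run of frame_valid, flag the first hStart and the last hEnd.
    i = 0
    while i < n:
        if not frame_valid[i]:
            i += 1
            continue
        j = i
        while j < n and frame_valid[j]:
            j += 1
        starts = [k for k in range(i, j) if ctrl[k][0]]
        ends = [k for k in range(i, j) if ctrl[k][1]]
        if starts:
            ctrl[starts[0]][2] = True           # vStart
        if ends:
            ctrl[ends[-1]][3] = True            # vEnd
        i = j
    return [tuple(c) for c in ctrl]


frame_valid = [0, 1, 1, 1, 1, 1, 1, 1, 1, 0]
line_valid = [0, 0, 1, 1, 0, 0, 1, 1, 0, 0]
for cycle, signals in enumerate(to_pixelcontrol(line_valid, frame_valid)):
    print(cycle, [int(s) for s in signals])
```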

Supported Pixel Data Types

Vision HDL Toolbox blocks and objects include ports or arguments for streaming pixel data. Each block and object supports one or more pixel formats. The supported formats vary depending on the operation the block or object performs. This table details common video formats supported by Vision HDL Toolbox.

| Type of Video | Pixel Format |
|---|---|
| Binary | Each pixel is represented by a single boolean or logical value. Used for true black-and-white video. |
| Grayscale | Each pixel is represented by luma, which is the gamma-corrected luminance value. This pixel is a single unsigned integer or fixed-point value. |
| Color | Each pixel is represented by 3 or 4 unsigned integer or fixed-point values representing the color components of the pixel. Vision HDL Toolbox blocks and objects use gamma-corrected color spaces, such as R'G'B' and Y'CbCr. To process multicomponent streams for blocks that do not support multicomponent input, replicate the block for each component. To set up multipixel streaming for color video, you can configure the Frame To Pixels block to return a multicomponent and multipixel stream. See MultiPixel-MultiComponent Video Streaming. |

Vision HDL Toolbox blocks have an input or output port, pixel, for the pixel data. Vision HDL Toolbox System objects expect or return an argument representing the pixel data. The following table describes the format of the pixel data.

| Port or Argument | Description | Data Type |
|---|---|---|
| pixel | You can simulate System objects with a multipixel streaming interface, but you cannot generate HDL code for System objects that use multipixel streams. To generate HDL code for multipixel algorithms, use the equivalent Simulink blocks. | Supported data types can include: The software supports |

Note

The blocks in this table support multipixel input, but not multicomponent pixels. The table shows what number of input pixels each block supports.

| Block | Number of pixels |
|---|---|
| Image Filter | 2, 4, or 8 |
| Bilateral Filter | 2, 4, or 8 |
| Line Buffer | 2, 4, or 8 |
| Gamma Corrector | 2, 4, or 8 |
| Edge Detector | 2, 4, or 8 |
| Median Filter | 2, 4, or 8 |
| Histogram | 2, 4, or 8 |
| Lookup Table | 2, 4, or 8 |
| Image Statistics | 2, 4, or 8 |
| Binary morphology: Closing, Dilation, Erosion, and Opening | 4 or 8 |
| Grayscale morphology: Grayscale Dilation | 2, 4, or 8 |

These blocks support multipixel-multicomponent pixel streams. The table shows what number of pixels and components each block supports.

| Block | Number of pixels | Number of components |
|---|---|---|
| Pixel Stream FIFO | 2, 4, or 8 | 1, 3, or 4 |
| Color Space Converter | 2, 4, or 8 | 3 |
| Chroma Resampler | 2, 4, or 8 | 3 |
| Demosaic Interpolator | 2, 4, or 8 | 3 (output only) |
| ROI Selector | 2, 4, or 8 | 1, 3, or 4 |
| Pixel Stream Aligner | 2, 4, or 8 | 1, 3, or 4 |

Streaming Pixel Control Signals

Vision HDL Toolbox blocks and objects include ports or arguments for control signals relating to each pixel. These five control signals indicate the validity of a pixel and its location in the frame. For multipixel streaming, each vector of pixel values has one set of control signals.

In Simulink, the control signal port is a nonvirtual bus data type called pixelcontrol. For details of the bus data type, see Pixel Control Bus.

In MATLAB, the control signal argument is a structure. For details of the structure data type, see Pixel Control Structure.

Sample Time

Because the Frame To Pixels block creates a serial stream of the pixels of each input frame, the sample time of your video source must match the total number of pixels in the frame. The total number of pixels is Total pixels per line × Total video lines, so set the sample time to this value.
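The arithmetic is simple, but it is easy to forget that the totals include the blanking regions. The short Python calculation below is a sketch of that computation. The helper name `frame_sample_time` and the variable names are made up for illustration, and the 1080p totals are the commonly used raster dimensions for that format, assumed here rather than taken from this page.

```python
def frame_sample_time(total_pixels_per_line, total_video_lines):
    """Sample time of the frame source, in units of one pixel period."""
    return total_pixels_per_line * total_video_lines


# Small custom frame: 3 active pixels per line and 2 active lines,
# with a 1-pixel back porch, a 2-pixel front porch, and 1 blank line
# before and after the active lines.
total_pixels_per_line = 1 + 3 + 2
total_video_lines = 1 + 2 + 1
print(frame_sample_time(total_pixels_per_line, total_video_lines))  # 24

# 1080p raster, assuming totals of 2200 pixels per line and 1125 lines.
print(frame_sample_time(2200, 1125))  # 2475000
```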

If your frame size is large, you may reach the fixed-step solver step size limit for sample times in Simulink, and receive an error like this.

The computed fixed step size (1.0) is 1000000.0 times smaller than all the discrete sample times in the model.

Timing Diagram of Single Pixel Serial Interface

To illustrate the streaming pixel protocol, this example converts a frame to a sequence of control and data signals. Consider a 2-by-3 pixel image. To model the blanking intervals, configure the serialized image to include inactive pixels in these areas around the active image:

1-pixel-wide back porch

2-pixel-wide front porch

1 line before the first active line

1 line after the last active line

You can configure the dimensions of the active and inactive regions with the Frame To Pixels block or the visionhdl.FrameToPixels object.

In the figure, the active image area is in the dashed rectangle, and the inactive pixels surround it. The pixels are labeled with their grayscale values.

![]()

The block or object serializes the image from left to right, one line at a time. The timing diagram shows the control signals and pixel data that correspond to this image, which is the serial output of the Frame To Pixels block for this frame, configured for single-pixel streaming.

![]()
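The serialization of this example frame can also be modeled in plain Python. The sketch below is not the toolbox implementation: the `Ctrl` tuple, the function `frame_to_pixels`, and the grayscale values in `frame` are all assumptions made for illustration. It also assumes that each serialized line begins with the back porch and ends with the front porch, and that blanking pixels carry the value 0.

```python
from typing import NamedTuple


class Ctrl(NamedTuple):
    hStart: bool
    hEnd: bool
    vStart: bool
    vEnd: bool
    valid: bool


def frame_to_pixels(frame, back_porch, front_porch, lines_before, lines_after):
    """Serialize a 2-D list of pixels into (pixel, Ctrl) pairs, one per cycle."""
    rows, cols = len(frame), len(frame[0])
    total_cols = back_porch + cols + front_porch
    total_rows = lines_before + rows + lines_after
    stream = []
    for r in range(total_rows):
        for c in range(total_cols):
            ar, ac = r - lines_before, c - back_porch   # position in the active frame
            if not (0 <= ar < rows and 0 <= ac < cols):
                # Blanking cycle: all control signals are false.
                stream.append((0, Ctrl(False, False, False, False, False)))
                continue
            h_start = ac == 0
            h_end = ac == cols - 1
            stream.append((frame[ar][ac], Ctrl(
                hStart=h_start,
                hEnd=h_end,
                vStart=h_start and ar == 0,        # first pixel of the first line
                vEnd=h_end and ar == rows - 1,     # last pixel of the last line
                valid=True)))
    return stream


frame = [[30, 60, 90],
         [120, 150, 180]]   # illustrative grayscale values
stream = frame_to_pixels(frame, back_porch=1, front_porch=2,
                         lines_before=1, lines_after=1)
print("cycle pixel hStart hEnd vStart vEnd valid")
for cycle, (pixel, ctrl) in enumerate(stream):
    print(cycle, pixel, *(int(flag) for flag in ctrl))
```

The printed table has 24 rows, one per cycle, of which 6 are valid. Reading down the columns gives the same information as a timing diagram: vStart and hStart are asserted together on the first active pixel, and vEnd and hEnd together on the last.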

For an example using the Frame To Pixels block to serialize an image, see Design Video Processing Algorithms for HDL in Simulink.

For an example using the FrameToPixels object to serialize an image, see Design Hardware-Targeted Image Filters in MATLAB.

Timing Diagram of Multipixel Serial Interface

This example converts a frame to a multipixel stream with 4 pixels per cycle and corresponding control signals. Consider a 64-pixel-wide frame with these inactive areas around the active image.

4-pixel-wide back porch

4-pixel-wide front porch

4 lines before the first active line

4 lines after the last active line

The Frame To Pixels block configured for multipixel streaming returns pixel vectors formed from the pixels of each line in the frame from left to right. This diagram shows the top-left corner of the frame. The gray pixels show the active area of the frame, and the zero-value pixels represent blanking pixels. The label on each active pixel represents the location of the pixel in the frame. The highlighted boxes show the sets of pixels streamed on one cycle. The pixels in the inactive region are also streamed four at a time. The gray box shows the four blanking pixels streamed the cycle before the start of the active frame. The blue box shows the four pixel values streamed on the first valid cycle of the frame, and the orange box shows the four pixel values streamed on the second valid cycle of the frame. The green box shows the first four pixels of the next active line.

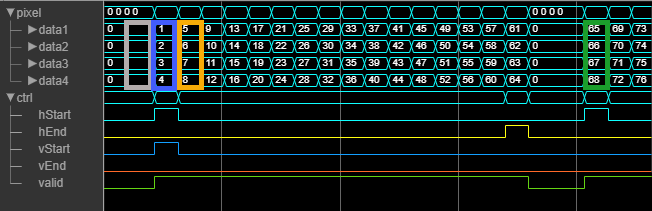

This waveform shows the multipixel streaming data and control signals for the first line of the same frame, streamed with 4 pixels per cycle. The pixelcontrol signals that apply to each set of four pixel values are shown below the data signals. Because the vector has only one valid signal, the pixels in the vector are either all valid or all invalid. The hStart and vStart signals apply to the pixel with the lowest index in the vector. The hEnd and vEnd signals apply to the pixel with the highest index in the vector.

Prior to the time period shown, the initial vertical blanking pixels are streamed four at a time, with all control signals set to false. This waveform shows the pixel stream of the first line of the image. The gray, blue, and orange boxes correspond to the highlighted areas of the frame diagram. After the first line is complete, the stream has two cycles of horizontal blanking that contain 8 invalid pixels (front and back porch). Then, the waveform shows the next line in the stream, starting with the green box.
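The per-line packing can be modeled with a short Python sketch. It is an illustration under stated assumptions rather than the block's implementation: the function `pack_line`, the `(pixel_vector, hStart, hEnd, valid)` tuple layout, and the use of 1 to 64 as pixel labels are all invented for this example, and the sketch requires every region width to be a multiple of the number of pixels per cycle.

```python
def pack_line(active_pixels, back_porch, front_porch, pixels_per_cycle):
    """Return one (pixel_vector, h_start, h_end, valid) tuple per cycle of a line."""
    widths = (len(active_pixels), back_porch, front_porch)
    if any(w % pixels_per_cycle for w in widths):
        raise ValueError("each region must be a multiple of pixels_per_cycle")

    blank = [0] * pixels_per_cycle
    cycles = []
    for _ in range(back_porch // pixels_per_cycle):
        cycles.append((blank, False, False, False))
    n = len(active_pixels)
    for i in range(0, n, pixels_per_cycle):
        vector = active_pixels[i:i + pixels_per_cycle]
        h_start = i == 0                        # refers to the lowest-index pixel
        h_end = i + pixels_per_cycle == n       # refers to the highest-index pixel
        cycles.append((vector, h_start, h_end, True))
    for _ in range(front_porch // pixels_per_cycle):
        cycles.append((blank, False, False, False))
    return cycles


line = list(range(1, 65))                       # 64 active pixels, labeled 1..64
cycles = pack_line(line, back_porch=4, front_porch=4, pixels_per_cycle=4)
for cycle, (vector, h_start, h_end, valid) in enumerate(cycles):
    print(cycle, vector, int(h_start), int(h_end), int(valid))
```

With these settings, each line takes 18 cycles: one blanking cycle, 16 valid cycles, and one more blanking cycle. The front-porch cycle of one line followed by the back-porch cycle of the next accounts for the two cycles of horizontal blanking between lines.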

For an example model that uses multipixel streaming, see Filter Multipixel Video Streams.

See Also

Frame To Pixels | Pixels To Frame | visionhdl.FrameToPixels | visionhdl.PixelsToFrame