groundingDinoObjectDetector

Description

Add-On Required: This feature requires the Computer Vision Toolbox Model for Grounding DINO Object Detection add-on.

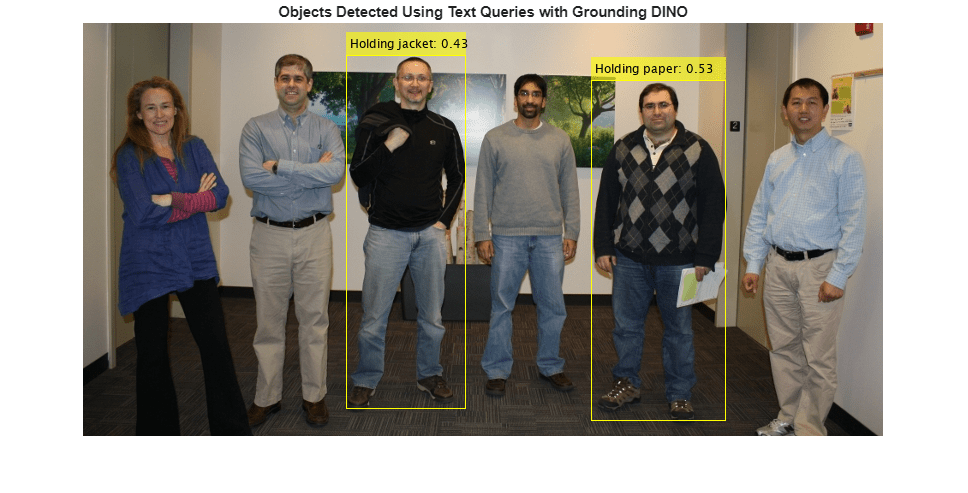

The groundingDinoObjectDetector object creates a Grounding DINO vision-language object detector for zero-shot object detection. This detector identifies and localizes arbitrary objects in an image using natural language descriptions (text prompts). Use the groundingDinoObjectDetector object to create a pretrained Grounding DINO object detector with either a Swin-Tiny or Swin-Base backbone network. You can then use the detect function of the groundingDinoObjectDetector object to detect objects in an unknown image. This feature also requires the Deep Learning Toolbox™ license.
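The workflow described above can be sketched as follows. This is a minimal illustration, not a definitive reference: the three output arguments of detect (bounding boxes, scores, and labels) are assumed by analogy with other Computer Vision Toolbox detectors, and the prompts and the sample image peppers.png are chosen only for demonstration.

```matlab
% Create a pretrained detector using the no-argument syntax.
detector = groundingDinoObjectDetector;

% Read a sample image that ships with MATLAB.
I = imread("peppers.png");

% Detect objects named by text prompts. ClassNames is passed at detect time
% because it was not set when the detector was created.
% ASSUMPTION: detect returns boxes, scores, and labels in this order.
[bboxes,scores,labels] = detect(detector,I,ClassNames=["pepper","garlic"]);

% Draw the detections only if something was found.
if ~isempty(bboxes)
    annotated = insertObjectAnnotation(I,"rectangle",bboxes,cellstr(labels));
    imshow(annotated)
end
```

Passing ClassNames at detect time keeps the detector object reusable across images that need different prompts.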

Creation

Syntax

Description

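detector = groundingDinoObjectDetector creates a pretrained Grounding DINO object detector.

detector = groundingDinoObjectDetector(name) creates a pretrained Grounding DINO object detector using the network specified by name.

detector = groundingDinoObjectDetector(___,Name=Value) sets the ClassNames and ClassDescriptions properties of the object detector using name-value arguments, in addition to any input argument from the previous syntax.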

You can use the ClassDescriptions property to provide natural language queries for detection and the ClassNames property to assign output labels for annotation. The ClassNames property is required. If you specify only ClassNames, the object uses its value for both detection and annotation. You can specify ClassDescriptions in addition to ClassNames if you want to provide more details about the objects to detect.

You can specify these properties either when creating the object or as name-value arguments when calling the detect function.

If you do not specify these properties at the time of object creation, you must specify at least the ClassNames name-value argument when you call the detect function.

If you specify these properties at object creation and when calling the detect function, the values specified in the detect function override the values set in the detector object.
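The interaction between creation-time properties and detect-time arguments might look like the following sketch. It rests on assumptions that this page does not confirm: that both properties accept string arrays of equal length, with each description paired with the class name at the same index, and that detect returns boxes, scores, and labels. The prompts themselves are purely illustrative.

```matlab
% Set the output labels and the richer text queries at creation.
% ASSUMPTION: ClassDescriptions(k) is the query used for ClassNames(k).
detector = groundingDinoObjectDetector( ...
    ClassNames=["pepper","garlic"], ...
    ClassDescriptions=["a shiny red or green bell pepper","a white garlic bulb"]);

I = imread("peppers.png");

% This call uses the values stored in the detector object.
[bboxes,scores,labels] = detect(detector,I);

% Values passed to detect override the stored values for this call.
% Both arguments are overridden together so that they stay the same length.
[bboxesOnion,scoresOnion,labelsOnion] = detect(detector,I, ...
    ClassNames="onion", ...
    ClassDescriptions="a purple onion");
```

Keeping short names in ClassNames and longer phrases in ClassDescriptions lets the annotation labels stay compact while the text query carries the detail.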

Input Arguments

Name-Value Arguments

Properties

Object Functions

| Function | Description |
| --- | --- |
| detect | Detect objects using Grounding DINO object detector |

Examples

References

[1] Liu, Shilong, Zhaoyang Zeng, Tianhe Ren, et al. “Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection.” In Computer Vision – ECCV 2024, vol. 15105, edited by Aleš Leonardis, Elisa Ricci, Stefan Roth, Olga Russakovsky, Torsten Sattler, and Gül Varol. Springer Nature Switzerland, 2025. https://doi.org/10.1007/978-3-031-72970-6_3.

Version History

Introduced in R2026a