minibatchqueue

Create mini-batches for deep learning

Description

Use a minibatchqueue object to create, preprocess, and manage mini-batches of data for deep learning.

A minibatchqueue object iterates over a datastore to provide data in a suitable format for training or prediction. The object prepares a queue of mini-batches that are preprocessed on demand. Use a minibatchqueue object to automatically convert your data to dlarray or gpuArray, convert data to a different precision, or apply a custom function to preprocess your data. You can prepare your data in parallel in the background.

You can manage your data in a custom training loop by using a minibatchqueue object. You can shuffle the data at the start of each training epoch using the shuffle function and collect data from the queue for each training iteration using the next function. You can check if any data is left in the queue using the hasdata function, and reset the queue when it is empty.
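The loop described above can be sketched as follows. This is a minimal illustration, not part of the original example set: the random data, the variable names, and the mini-batch size are assumptions chosen to keep the code self-contained.

```matlab
% Illustrative data (assumption): 10 observations, one per row.
data = rand(10,3);
ds = arrayDatastore(data);

% Keep the outputs as plain CPU arrays so the sketch runs without a GPU.
mbq = minibatchqueue(ds, ...
    MiniBatchSize=4, ...
    OutputAsDlarray=false, ...
    OutputEnvironment="cpu");

numEpochs = 2;
numBatches = 0;
for epoch = 1:numEpochs
    % Shuffle at the start of each epoch. Shuffling also returns the
    % queue to the start of the data.
    shuffle(mbq);

    % Collect mini-batches until the queue is empty.
    while hasdata(mbq)
        X = next(mbq);
        numBatches = numBatches + 1;
    end
end
```

With 10 observations and a mini-batch size of 4, each epoch yields two full mini-batches and one partial mini-batch, because the default behavior is to return partial mini-batches.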

Creation

Syntax

Description

mbq = minibatchqueue(ds) creates a minibatchqueue object from the input datastore ds. The mini-batches in mbq have the same number of variables as the results of read on the input datastore.

mbq = minibatchqueue(ds,numOutputs) creates a minibatchqueue object from the input datastore ds and sets the number of variables in each mini-batch. Use this syntax when you use MiniBatchFcn to specify a mini-batch preprocessing function that has a different number of outputs than the number of variables of the input datastore ds.

mbq = minibatchqueue(___,Name=Value) sets one or more properties using name-value arguments. For example, minibatchqueue(ds,MiniBatchSize=64,PartialMiniBatch="discard") sets the size of the returned mini-batches to 64 and discards any mini-batches with fewer than 64 observations.

Input Arguments

ds: Input datastore, specified as a MATLAB® datastore or a custom datastore.

For more information about datastores for deep learning, see Datastores for Deep Learning.

numOutputs: Number of mini-batch variables, specified as a positive integer. By default, the number of mini-batch variables is equal to the number of variables of the input datastore.

You can determine the number of variables of the input datastore by examining the

output of read(ds). If your datastore returns a table, the number

of variables is the number of variables of the table. If your datastore returns a cell

array, the number of variables is the size of the second dimension of the cell array.

If you use the MiniBatchFcn name-value argument to specify a

mini-batch preprocessing function that returns a different number of variables than

the input datastore, you must set numOutputs to match the number of

outputs of the function.

Example: 2
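As a rough sketch of how to count the variables of a datastore, the following code reads one observation from a combined datastore and inspects the result. The two array datastores and their contents are assumptions made for illustration.

```matlab
% Illustrative datastore with two variables (assumed data).
dsX = arrayDatastore(rand(5,2));
dsY = arrayDatastore((1:5)');
ds = combine(dsX,dsY);

% Each read of the combined datastore returns a cell array with one
% column per variable.
sample = read(ds);
numVars = size(sample,2);

% Return the datastore to its start after peeking at the data.
reset(ds);

% Pass the count explicitly. This matches the default for this datastore.
mbq = minibatchqueue(ds,numVars, ...
    OutputAsDlarray=false, ...
    OutputEnvironment="cpu");
```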

Properties

This property is read-only.

MiniBatchSize: Size of mini-batches returned by the next function, specified as a positive integer. The default value is 128.

Tip

For best performance, if the input datastore ds has a

ReadSize property, such as an imageDatastore, then set the ReadSize property of the

input datastore and the MiniBatchSize property of the

minibatchqueue object to the same value. If the input datastore

ds has a MiniBatchSize property, such as an

augmentedImageDatastore, then set the MiniBatchSize

property of the input datastore and the MiniBatchSize property of

the minibatchqueue object to the same value.

Example: 256
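The tip above can be sketched for an imageDatastore as follows. The folder path is an assumption: it points to the digit images that ship with many Deep Learning Toolbox installations, and you may need to replace it with a folder of your own images.

```matlab
% Assumed location of sample images. Replace with your own image folder
% if this path does not exist on your system.
digitDatasetPath = fullfile(matlabroot,"toolbox","nnet","nndemos", ...
    "nndatasets","DigitDataset");
imds = imageDatastore(digitDatasetPath, ...
    IncludeSubfolders=true, ...
    LabelSource="foldernames");

% Use the same value for the datastore read size and the mini-batch size.
miniBatchSize = 64;
imds.ReadSize = miniBatchSize;
mbq = minibatchqueue(imds,MiniBatchSize=miniBatchSize);
```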

PartialMiniBatch: Return or discard incomplete mini-batches, specified as "return" or "discard".

If the total number of observations is not exactly divisible by

MiniBatchSize, the final mini-batch returned by the next function

can have fewer than MiniBatchSize observations. This property

specifies how any partial mini-batches are treated, using the following options:

- "return": A mini-batch can contain fewer than MiniBatchSize observations. All data is returned.
- "discard": All mini-batches must contain exactly MiniBatchSize observations. Some data can be discarded from the queue if there is not enough for a complete mini-batch.

Set PartialMiniBatch to "discard" if you

require that all of your mini-batches are the same size.

The minibatchqueue object stores this property as a character vector.

Example: "discard"

Data Types: char | string
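To make the difference between the two options concrete, the following sketch counts the mini-batches produced from 10 observations with a mini-batch size of 4. The data and variable names are assumptions made for illustration.

```matlab
options = ["return" "discard"];
counts = zeros(1,2);

for k = 1:numel(options)
    % Illustrative data (assumption): 10 observations, one per row.
    ds = arrayDatastore(rand(10,3));

    mbq = minibatchqueue(ds, ...
        MiniBatchSize=4, ...
        PartialMiniBatch=options(k), ...
        OutputAsDlarray=false, ...
        OutputEnvironment="cpu");

    % Count the mini-batches that the queue produces in one pass.
    while hasdata(mbq)
        X = next(mbq);
        counts(k) = counts(k) + 1;
    end
end

counts
```

With "return", the queue is expected to produce three mini-batches (4, 4, and 2 observations). With "discard", it is expected to produce two, and the last 2 observations are dropped.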

This property is read-only.

MiniBatchFcn: Mini-batch preprocessing function, specified as "collate" or a function handle.

The default value of MiniBatchFcn is

"collate". This function concatenates the mini-batch variables into

arrays. If the mini-batch variables have N dimensions, this function

concatenates the mini-batch variables along dimension N+1.

Use a function handle to a custom function to preprocess mini-batches for custom training. Doing so is recommended for one-hot encoding classification labels, padding sequence data, calculating average images, and so on. You must specify a custom function if your data consists of cell arrays containing arrays of different sizes.

If you specify a custom mini-batch preprocessing function, the function must

concatenate each batch of output variables into an array after preprocessing and return

each variable as a separate function output. The function must accept at least as many

inputs as the number of variables of the underlying datastore. The inputs are passed to

the custom function as N-by-1 cell arrays, where N

is the number of observations in the mini-batch. The function can return as many

variables as required. If the function specified by MiniBatchFcn

returns a different number of outputs than inputs, specify numOutputs

as the number of outputs of the function.

The following actions are not recommended inside the custom function. To reproduce

the desired behavior, instead, set the corresponding property when you create the

minibatchqueue object.

| Action | Recommended Property |
|---|---|
| Cast variable to different data type. | OutputCast |
| Move data to GPU. | OutputEnvironment |
| Convert data to dlarray. | OutputAsDlarray |
| Apply data format to dlarray variable. | MiniBatchFormat |

The minibatchqueue object stores this property as a character vector or a

function handle.

Example: @myCustomFunction

Data Types: char | string | function_handle
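The following sketch shows a custom preprocessing function that returns more outputs than the datastore has variables, which is why numOutputs is set to 2. The function stackAndMean and the data are hypothetical and exist only for illustration. The anonymous function uses deal to return two outputs.

```matlab
% Illustrative data (assumption): 10 observations stored as columns.
data = rand(3,10);
ds = arrayDatastore(data,IterationDimension=2);   % each read returns a 3-by-1 column

% Hypothetical preprocessing function. XCell is an N-by-1 cell array with
% one observation per cell. The function concatenates the observations
% into a 3-by-N array and also returns the mean observation.
stackAndMean = @(XCell) deal(cat(2,XCell{:}), mean(cat(2,XCell{:}),2));

% The datastore has one variable, but the function returns two outputs.
mbq = minibatchqueue(ds,2, ...
    MiniBatchSize=5, ...
    MiniBatchFcn=stackAndMean, ...
    OutputAsDlarray=false, ...
    OutputEnvironment="cpu");

[X,mu] = next(mbq);
size(X)
size(mu)
```

Casting, GPU transfer, and dlarray conversion are deliberately left to the queue properties rather than done inside the function, in line with the table above.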

Since R2024a

PreprocessingEnvironment: Environment for fetching and preprocessing mini-batches, specified as one of these values:

- "serial": Fetch and preprocess data in serial.
- "background": Fetch and preprocess data using the background pool. The mini-batch preprocessing function MiniBatchFcn must support thread-based environments. For more information, see Run MATLAB Functions in Thread-Based Environment.
- "parallel": Fetch and preprocess data using parallel workers. The software opens a parallel pool using the default profile, if a local pool is not currently open. Non-local parallel pools are not supported. Using this option requires Parallel Computing Toolbox™.

To use the "background" or "parallel" options,

the input datastore must be subsettable or partitionable. Custom datastores must

implement the matlab.io.datastore.Subsettable class.

If you use the "background" or "parallel"

options, the order in which mini-batches are returned by the next

function varies, making training a network using the minibatchqueue

nondeterministic even if you use the deep.gpu.deterministicAlgorithms function.

The preprocessing environment defines how the MiniBatchFcn is

applied but does not affect further processing, including applying the effects of the

OutputCast, OutputEnvironment, OutputAsDlarray, and MiniBatchFormat.

Use the "background" option when your mini-batches require

significant preprocessing. If your preprocessing is not supported on threads, or if you

need to control the number of workers, use the "parallel" option. For

more information about the preprocessing environment, see Preprocess Data in the Background or in Parallel.

The minibatchqueue object stores this property as a character vector.

Before R2024a: To preprocess mini-batches in parallel, set

the DispatchInBackground property to 1

(true).

Data Types: char | string

This property is read-only.

OutputCast: Data type of each mini-batch variable, specified as "single", "double", "int8", "int16", "int32", "int64", "uint8", "uint16", "uint32", "uint64", "logical", or "char", a string array of these values, a cell array of character vectors of these values, or an empty vector.

If you specify OutputCast as an empty vector, the data type of

each mini-batch variable is unchanged. To specify a different data type for each

mini-batch variable, specify a string array containing an entry for each mini-batch

variable. The order of the elements of this string array must match the order in which

the mini-batch variables are returned. This order is the same order in which the

variables are returned from the function specified by MiniBatchFcn.

If you do not specify a custom function for MiniBatchFcn, it is the

same order in which the variables are returned by the underlying datastore.

You must make sure that the value of OutputCast does not

conflict with the values of the OutputAsDlarray or OutputEnvironment properties. If you specify OutputAsDlarray as true or 1, check

that the data type specified by OutputCast is supported by dlarray. If you

specify OutputEnvironment as "gpu" or "auto"

and a supported GPU is available, check that the data type specified by

OutputCast is supported by gpuArray (Parallel Computing Toolbox).

The minibatchqueue object stores this property as a cell array of character

vectors.

Example: ["single" "single" "logical"]

Data Types: char | string
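As a small sketch of per-variable casting, the following code requests a different data type for each of two mini-batch variables. The data is an assumption made for illustration. The outputs are kept as plain CPU arrays so that the data types are easy to inspect.

```matlab
% Illustrative two-variable datastore (assumed data).
dsX = arrayDatastore(rand(4,4,6),IterationDimension=3);
dsY = arrayDatastore((1:6)');
ds = combine(dsX,dsY);

% One OutputCast entry per mini-batch variable, in the order in which
% the variables are returned.
mbq = minibatchqueue(ds, ...
    MiniBatchSize=3, ...
    OutputCast=["single" "double"], ...
    OutputAsDlarray=false, ...
    OutputEnvironment="cpu");

[X,Y] = next(mbq);
class(X)
class(Y)
```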

This property is read-only.

OutputAsDlarray: Flag to convert mini-batch variables to dlarray, specified as a numeric or logical 1 (true) or 0 (false) or as a vector of numeric or logical values.

To specify a different value for each output, specify a vector containing an entry for each mini-batch variable. The order of the elements of this vector must match the order in which the mini-batch variables are returned. This order is the same order in which the variables are returned from the function specified by MiniBatchFcn. If you do not specify a custom function for MiniBatchFcn, it is the same order in which the variables are returned by the underlying datastore.

Variables that are converted to dlarray have the underlying data

type specified by the OutputCast property.

Example: [1 1 0]

Data Types: logical
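The following sketch converts only the first of two mini-batch variables to a dlarray and gives that variable a data format. The data is an assumption made for illustration. It relies on the default "collate" behavior described earlier, which concatenates the 3-D observations along the fourth dimension.

```matlab
% Illustrative data (assumption): eight 28-by-28-by-3 arrays and one
% numeric label per observation.
dsX = arrayDatastore(rand(28,28,3,8),IterationDimension=4);
dsY = arrayDatastore((1:8)');
ds = combine(dsX,dsY);

% Convert only the first variable to a dlarray. A non-dlarray variable
% must have an empty format.
mbq = minibatchqueue(ds, ...
    MiniBatchSize=4, ...
    OutputAsDlarray=[1 0], ...
    MiniBatchFormat=["SSCB" ""], ...
    OutputEnvironment="cpu");

[X,Y] = next(mbq);
class(X)
dims(X)
class(Y)
```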

This property is read-only.

MiniBatchFormat: Data format of mini-batch variables, specified as a string scalar, character vector, string array, or a cell array of character vectors.

The mini-batch format is applied to dlarray variables only.

Non-dlarray mini-batch variables must have a

MiniBatchFormat of "".

To avoid an error when you have a mix of dlarray and

non-dlarray variables, you must specify a value for each output by

providing a string array containing an entry for each mini-batch variable. The order of

the elements of this string array must match the order in which the mini-batch variables

are returned. This is the same order in which the variables are returned from the

function specified by MiniBatchFcn. If you do not specify a custom

function for MiniBatchFcn, it is the same order in which the

variables are returned by the underlying datastore.

If you specify more dimensions than are present in the data, they are added as

singleton dimensions. For example, to add a singleton channel dimension to the data, add

a trailing "C" dimension.

If you specify a MiniBatchFormat of "" for a

mini-batch variable that is a dlarray with an existing format, the

minibatchqueue does not remove the existing format.

A data format is a string of characters, where each character describes the type of the corresponding data dimension.

The characters are:

- "S": Spatial
- "C": Channel
- "B": Batch
- "T": Time
- "U": Unspecified

For example, consider an array that represents a batch of sequences where the first,

second, and third dimensions correspond to channels, observations, and time steps,

respectively. You can describe the data as having the format "CBT"

(channel, batch, time).

You can specify multiple dimensions labeled "S" or "U".

You can use the labels "C", "B", and

"T" once each, at most. The software ignores singleton trailing

"U" dimensions after the second dimension.

For more information, see Deep Learning Data Formats.

The minibatchqueue object stores this property as a cell array of character

vectors.

Example: ["SSCB" ""]

Data Types: char | string
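Data formats can also be explored directly on dlarray objects, outside a minibatchqueue. The following sketch uses assumed random data to show the "CBT" format from the text and the effect of a trailing "C" label, which adds a singleton channel dimension.

```matlab
% A batch of sequences: 3 channels, 4 observations, 5 time steps.
X = dlarray(rand(3,4,5),"CBT");
dims(X)

% A batch of four 28-by-28 single-channel images stored without a
% channel dimension. The trailing "C" label adds a singleton channel
% dimension, and dlarray reorders the dimensions so that the channel
% dimension comes before the batch dimension.
Z = dlarray(rand(28,28,4),"SSBC");
dims(Z)
size(Z)
```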

OutputEnvironment: Hardware resource for mini-batch variables returned using the next function, specified as one of the following values:

- "auto": Return mini-batch variables on the GPU if one is available. Otherwise, return mini-batch variables on the CPU.
- "gpu": Return mini-batch variables on the GPU.
- "cpu": Return mini-batch variables on the CPU.

To return only specific variables on the GPU, specify OutputEnvironment as a string array containing an entry for each mini-batch variable. The order of the elements of this string array must match the order in which the mini-batch variables are returned. This order is the same order in which the variables are returned from the function specified by MiniBatchFcn. If you do not specify a custom MiniBatchFcn, it is the same order in which the variables are returned by the underlying datastore.

Using a GPU requires Parallel Computing Toolbox. To use a GPU for deep

learning, you must also have a supported GPU device. For information on supported devices, see

GPU Computing Requirements (Parallel Computing Toolbox). If you choose the "gpu" option and Parallel Computing Toolbox or a suitable GPU is not available, then the software returns an

error.

The minibatchqueue object stores this property as a cell array of character

vectors.

Example: ["gpu" "cpu"]

Data Types: char | string
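As a sketch of per-variable placement, the following code returns the first variable on the GPU when one is available and always keeps the second variable on the CPU. The data is an assumption made for illustration. The code uses "auto" rather than "gpu" so that it also runs on machines without a supported GPU.

```matlab
% Illustrative two-variable datastore (assumed data).
dsX = arrayDatastore(rand(4,4,6),IterationDimension=3);
dsY = arrayDatastore((1:6)');
ds = combine(dsX,dsY);

% One OutputEnvironment entry per mini-batch variable.
mbq = minibatchqueue(ds, ...
    MiniBatchSize=3, ...
    OutputAsDlarray=false, ...
    OutputEnvironment=["auto" "cpu"]);

[X,Y] = next(mbq);

% Check where each variable is stored.
onGPU = [isgpuarray(X) isgpuarray(Y)]
```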

Object Functions

Examples

Use a minibatchqueue object to automatically prepare

mini-batches of images and classification labels for training using the trainnet

function or in a custom training loop.

Create a datastore. Calling read on auimds

produces a table with two variables: input, containing the image

data, and response, containing the corresponding classification

labels.

    auimds = augmentedImageDatastore([100 100],digitDatastore);
    A = read(auimds);
    head(A,2)

    ans =

             input         response
        _______________    ________

        {100×100 uint8}       0
        {100×100 uint8}       0

Create a minibatchqueue object from auimds. Set

the MiniBatchSize property to 256.

The minibatchqueue object has two output variables: the images and

classification labels from the input and response

variables of auimds, respectively. Set the

minibatchqueue object to return the images as a formatted

dlarray on the GPU. The images are single-channel black-and-white

images. Add a singleton channel dimension by applying the format

"SSBC" to the batch. Return the labels as a

non-dlarray on the CPU.

    mbq = minibatchqueue(auimds,...
        MiniBatchSize=256,...
        OutputAsDlarray=[1 0],...
        MiniBatchFormat=["SSBC" ""],...
        OutputEnvironment=["gpu" "cpu"])

To obtain mini-batches from mbq to use in a custom training loop,

use the next function.

[X,Y] = next(mbq);

Preprocess data using a minibatchqueue with a custom mini-batch preprocessing function. The custom function rescales the incoming image data between 0 and 1 and calculates the average image.

Unzip the data and create a datastore.

    unzip("MerchData.zip");
    imds = imageDatastore("MerchData", ...
        IncludeSubfolders=true, ...
        LabelSource="foldernames");

Create a minibatchqueue.

- Set the number of outputs to 2, to match the number of outputs of the function.
- Set the mini-batch size.
- Preprocess the data using the custom function preprocessMiniBatch defined at the end of this example. The custom function concatenates the image data into a numeric array, rescales the image between 0 and 1, and calculates the average of the batch of images. The function returns the rescaled batch of images and the average image.
- Apply the preprocessing function in the background by setting the PreprocessingEnvironment property to "background". You can preprocess your data in the background if your preprocessing function is supported for a thread-based environment.
- Do not convert the mini-batch output variables to a dlarray.

    mbq = minibatchqueue(imds,2,...
        MiniBatchSize=16,...
        MiniBatchFcn=@preprocessMiniBatch,...
        PreprocessingEnvironment="background",...
        OutputAsDlarray=false)

    mbq =
      minibatchqueue with 2 outputs and properties:

       Mini-batch creation:
                   MiniBatchSize: 16
                PartialMiniBatch: 'return'
                    MiniBatchFcn: @preprocessMiniBatch
        PreprocessingEnvironment: 'background'

       Outputs:
                      OutputCast: {'single' 'single'}
                 OutputAsDlarray: [0 0]
                 MiniBatchFormat: {'' ''}
               OutputEnvironment: {'auto' 'auto'}

Obtain a mini-batch and display the average of the images in the mini-batch. A thread worker in the backgroundPool applies the preprocessing function.

    [X,averageImage] = next(mbq);
    imshow(averageImage)

    function [X,averageImage] = preprocessMiniBatch(XCell)
        X = cat(4,XCell{:});
        X = rescale(X,InputMin=0,InputMax=255);
        averageImage = mean(X,4);
    end

Train a network using minibatchqueue to manage the processing of mini-batches.

Load Training Data

Load the digits training data and store the data in a datastore. Create a datastore for the images and one for the labels using arrayDatastore. Then, combine the datastores to produce a single datastore to use with minibatchqueue.

    [XTrain,YTrain] = digitTrain4DArrayData;
    dsX = arrayDatastore(XTrain,IterationDimension=4);
    dsY = arrayDatastore(YTrain);
    dsTrain = combine(dsX,dsY);

Determine the number of unique classes in the label data.

    classes = categories(YTrain);
    numClasses = numel(classes);

Define Network

Create a dlnetwork object.

net = dlnetwork;

Specify the layers and the average image value using the Mean option in the image input layer.

    layers = [
        imageInputLayer([28 28 1],Mean=mean(XTrain,4))
        convolution2dLayer(5,20)
        reluLayer
        convolution2dLayer(3,20,Padding=1)
        reluLayer
        convolution2dLayer(3,20,Padding=1)
        reluLayer
        fullyConnectedLayer(numClasses)
        softmaxLayer];

Add the layers and initialize the network.

    net = addLayers(net,layers);
    net = initialize(net);

Define Model Loss Function

Create the helper function modelLoss, listed at the end of the example. The function takes as input a dlnetwork object net and a mini-batch of input data X with corresponding labels Y, and returns the loss and the gradients of the loss with respect to the learnable parameters in net.

Specify Training Options

Specify the options to use during training.

    numEpochs = 10;
    miniBatchSize = 128;

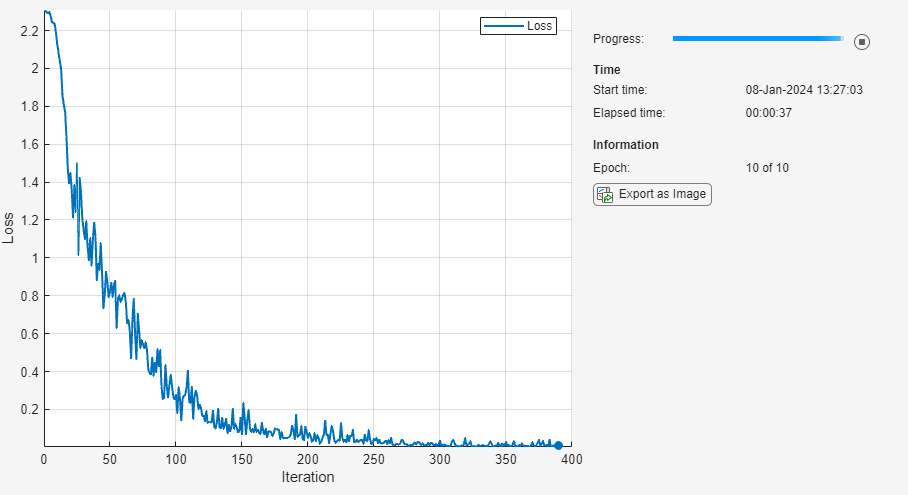

Visualize the training progress in a plot.

    plots = "training-progress";

Create the minibatchqueue

Use minibatchqueue to process and manage the mini-batches of images. For each mini-batch:

- Discard partial mini-batches.
- Use the custom mini-batch preprocessing function preprocessMiniBatch (defined at the end of this example) to one-hot encode the class labels.
- Format the image data with the dimension labels 'SSCB' (spatial, spatial, channel, batch). By default, the minibatchqueue object converts the data to dlarray objects with underlying data type single. Do not add a format to the class labels.
- Train on a GPU if one is available. By default, the minibatchqueue object converts each output to a gpuArray if a GPU is available. Using a GPU requires Parallel Computing Toolbox™ and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox).

    mbq = minibatchqueue(dsTrain,...
        MiniBatchSize=miniBatchSize,...
        PartialMiniBatch="discard",...
        MiniBatchFcn=@preprocessMiniBatch,...
        MiniBatchFormat=["SSCB",""]);

Train Network

Train the model using a custom training loop. For each epoch, shuffle the data and loop over mini-batches while data is still available in the minibatchqueue. Update the network parameters using the adamupdate function. At the end of each epoch, display the training progress.

Initialize the average gradients and squared average gradients.

    averageGrad = [];
    averageSqGrad = [];

Calculate the total number of iterations for the training progress monitor. Because the minibatchqueue discards partial mini-batches, round the number of iterations per epoch down.

    numObservationsTrain = numel(YTrain);
    numIterationsPerEpoch = floor(numObservationsTrain / miniBatchSize);
    numIterations = numEpochs * numIterationsPerEpoch;

Initialize the TrainingProgressMonitor object. Because the timer starts when you create the monitor object, make sure that you create the object close to the training loop.

    if plots == "training-progress"
        monitor = trainingProgressMonitor(Metrics="Loss",Info="Epoch",XLabel="Iteration");
    end

Train the network.

    iteration = 0;
    epoch = 0;

    while epoch < numEpochs && ~monitor.Stop
        epoch = epoch + 1;

        % Shuffle data.
        shuffle(mbq);

        while hasdata(mbq) && ~monitor.Stop
            iteration = iteration + 1;

            % Read mini-batch of data.
            [X,Y] = next(mbq);

            % Evaluate the model loss and gradients using dlfeval and the
            % modelLoss helper function.
            [loss,grad] = dlfeval(@modelLoss,net,X,Y);

            % Update the network parameters using the Adam optimizer.
            [net,averageGrad,averageSqGrad] = adamupdate(net,grad,averageGrad,averageSqGrad,iteration);

            % Update the training progress monitor.
            if plots == "training-progress"
                recordMetrics(monitor,iteration,Loss=loss);
                updateInfo(monitor,Epoch=epoch + " of " + numEpochs);
                monitor.Progress = 100 * iteration/numIterations;
            end
        end
    end

Model Loss Function

The modelLoss helper function takes as input a dlnetwork object net and a mini-batch of input data X with corresponding labels Y, and returns the loss and the gradients of the loss with respect to the learnable parameters in net. To compute the gradients automatically, use the dlgradient function.

    function [loss,gradients] = modelLoss(net,X,Y)
        YPred = forward(net,X);
        loss = crossentropy(YPred,Y);
        gradients = dlgradient(loss,net.Learnables);
    end

Mini-Batch Preprocessing Function

The preprocessMiniBatch function preprocesses the data using the following steps:

Extract the image data from the incoming cell array and concatenate the data into a numeric array. Concatenating the image data over the fourth dimension adds a third dimension to each image, to be used as a singleton channel dimension.

Extract the label data from the incoming cell array and concatenate along the second dimension into a categorical array.

One-hot encode the categorical labels into numeric arrays. Encoding into the first dimension produces an encoded array that matches the shape of the network output.

    function [X,Y] = preprocessMiniBatch(XCell,YCell)
        % Extract image data from the cell array and concatenate over fourth
        % dimension to add a third singleton dimension, as the channel
        % dimension.
        X = cat(4,XCell{:});

        % Extract label data from cell and concatenate.
        Y = cat(2,YCell{:});

        % One-hot encode labels.
        Y = onehotencode(Y,1);
    end
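To see what the one-hot encoding step produces, the following sketch encodes a small categorical row vector along the first dimension. The labels are assumptions made for illustration and are not the digit labels used in the example.

```matlab
% Four illustrative labels drawn from three classes: a, b, and c.
labels = categorical(["a" "b" "a" "c"]);

% Encode along the first dimension. Each column is one observation and
% each row corresponds to one class.
Y = onehotencode(labels,1)
```

The result is a 3-by-4 array, which has the same classes-by-observations layout as the network output for a mini-batch of four observations.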

Version History

Introduced in R2020b

Setting the DispatchInBackground property is not recommended. Set the PreprocessingEnvironment property instead.

The PreprocessingEnvironment property provides the same

functionality and also allows you to use the backgroundPool for

preprocessing when you set PreprocessingEnvironment to

"background".

This table shows how to update your code:

| Not recommended | Recommended |
|---|---|
| minibatchqueue(imds,DispatchInBackground=false) (default) | minibatchqueue(imds,PreprocessingEnvironment="serial") (default) |
| minibatchqueue(imds,DispatchInBackground=true) | minibatchqueue(imds,PreprocessingEnvironment="parallel") |

There are no plans to remove the DispatchInBackground

property.
