sequenceInputLayer

Sequence input layer

Description

A sequence input layer inputs sequence data to a neural network and applies data normalization.

Creation

Description

layer = sequenceInputLayer(inputSize,Name=Value)

Input Arguments

inputSize

Size of the input, specified as a positive integer or a vector of positive integers.

For vector sequence input, inputSize is a scalar corresponding to the number of features.

For 1-D image sequence input, inputSize is a vector of two elements [h c], where h is the image height and c is the number of channels of the image.

For 2-D image sequence input, inputSize is a vector of three elements [h w c], where h is the image height, w is the image width, and c is the number of channels of the image.

For 3-D image sequence input, inputSize is a vector of four elements [h w d c], where h is the image height, w is the image width, d is the image depth, and c is the number of channels of the image.

To specify the minimum sequence length of the input data, use the MinLength name-value argument.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64
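As an illustration of the four input-size forms described above, the following sketch creates one layer for each kind of sequence data. The specific sizes are arbitrary values chosen for this example, not values from the reference text.

```matlab
% Vector sequences with 12 features per time step.
layerVector = sequenceInputLayer(12);

% 1-D image sequences: height 64 with 3 channels ([h c]).
layer1D = sequenceInputLayer([64 3]);

% 2-D image sequences: 224-by-224 RGB frames ([h w c]).
layer2D = sequenceInputLayer([224 224 3]);

% 3-D image sequences: 32-by-32-by-16 single-channel volumes ([h w d c]).
layer3D = sequenceInputLayer([32 32 16 1]);

% The layer stores the size in its InputSize property.
disp(layer2D.InputSize)
```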

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose Name in quotes.

Example: sequenceInputLayer(12,Name="seq1") creates a sequence input layer with an input size of 12 and the name 'seq1'.
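The two calling conventions described above can be compared side by side. This is a small sketch; the variable names layerNew and layerOld are chosen for illustration only.

```matlab
% R2021a and later: Name=Value syntax.
layerNew = sequenceInputLayer(12,Name="seq1");

% Earlier releases: comma-separated name and value, with the name in quotes.
layerOld = sequenceInputLayer(12,"Name","seq1");

% Both calls set the same property value.
sameName = isequal(layerNew.Name,layerOld.Name)
```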

MinLength

Minimum sequence length of the input data, specified as a positive integer. When training or making predictions with the network, if the input data has fewer than MinLength time steps, then the software throws an error.

When you create a network that downsamples data in the time dimension, you must make sure that the network supports your training data and any data for prediction. Some deep learning layers require that the input has a minimum sequence length. For example, a 1-D convolution layer requires that the input has at least as many time steps as the filter size.

As time series of sequence data propagate through a network, the sequence length can change. For example, downsampling operations such as 1-D convolutions can output data with fewer time steps than their input. This means that downsampling operations can cause later layers in the network to throw an error because the data has a shorter sequence length than the minimum length required by the layer.

When you train or assemble a network, the software automatically checks that sequences of length 1 can propagate through the network. Some networks might not support sequences of length 1, but can successfully propagate longer sequences. To check that a network supports propagating your training and expected prediction data, set the MinLength property to a value less than or equal to the minimum length of your data and the expected minimum length of your prediction data.

Tip

To prevent convolution and pooling layers from changing the size of the data, set the Padding option of the layer to "same" or "causal".

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64
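To make the length bookkeeping concrete, here is a minimal sketch. It assumes unpadded 1-D convolutions with stride 1 and no dilation, so each convolution shortens a sequence of T time steps to T - filterSize + 1. The filter size, channel counts, and the minimum length of 20 are hypothetical values for this example.

```matlab
filterSize = 5;     % hypothetical filter size
minLength = 20;     % assumed shortest sequence in the training and prediction data

% Output length of one unpadded convolution (stride 1, no dilation).
outputLength = @(T) T - filterSize + 1;

lengthAfterConv1 = outputLength(minLength);          % 20 - 5 + 1 = 16
lengthAfterConv2 = outputLength(lengthAfterConv1);   % 16 - 5 + 1 = 12

% Declare the minimum length on the input layer so that the network is
% checked against sequences at least that long.
layers = [
    sequenceInputLayer(3,MinLength=minLength)
    convolution1dLayer(filterSize,16)
    reluLayer
    convolution1dLayer(filterSize,32)
    reluLayer
    globalMaxPooling1dLayer
    fullyConnectedLayer(4)
    softmaxLayer];

net = dlnetwork(layers);
```

Both convolutions still receive at least filterSize time steps (20 and 16), which is the condition the text above describes for a 1-D convolution layer.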

Normalization

Data normalization to apply every time data is forward propagated through the input layer, specified as one of the following:

"zerocenter": Subtract the mean specified by Mean.

"zscore": Subtract the mean specified by Mean and divide by StandardDeviation.

"rescale-symmetric": Rescale the input to be in the range [-1, 1] using the minimum and maximum values specified by Min and Max, respectively.

"rescale-zero-one": Rescale the input to be in the range [0, 1] using the minimum and maximum values specified by Min and Max, respectively.

"none": Do not normalize the input data.

Function handle: Normalize the data using the specified function. The function must be of the form Y = f(X), where X is the input data and the output Y is the normalized data.

If the input data is complex-valued and the SplitComplexInputs option is 0 (false), then the Normalization option must be "zerocenter", "zscore", "none", or a function handle. (since R2024a)

Before R2024a: To input complex-valued data into the network, the SplitComplexInputs option must be 1 (true).

Tip

By default, the software automatically calculates the normalization statistics when you use the trainnet function. To save time when training, specify the required statistics for normalization and set the ResetInputNormalization option in trainingOptions to 0 (false).

The software applies normalization to all input elements, including padding values.

The SequenceInputLayer object stores the Normalization property as a character vector or a function handle.

Data Types: char | string | function_handle
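The arithmetic behind each option can be sketched in plain MATLAB on a single channel. The two rescaling formulas below are the usual min-max expressions and are an assumption of this sketch, since the text gives only the target ranges. The data values and the divisor 255 in the function handle are arbitrary.

```matlab
X = [2 4 6 8];      % one channel over four time steps (example values)

mu = mean(X);
sigma = std(X);
xmin = min(X);
xmax = max(X);

Yzerocenter = X - mu;                            % "zerocenter"
Yzscore = (X - mu)./sigma;                       % "zscore"
Yzeroone = (X - xmin)./(xmax - xmin);            % "rescale-zero-one", range [0, 1]
Ysymmetric = 2*(X - xmin)./(xmax - xmin) - 1;    % "rescale-symmetric", range [-1, 1]

% Layers that apply a built-in option and a custom function handle.
layerZscore = sequenceInputLayer(1, ...
    Normalization="zscore", ...
    Mean=mu, ...
    StandardDeviation=sigma);
layerCustom = sequenceInputLayer(1,Normalization=@(X) X./255);
```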

NormalizationDimension

Normalization dimension, specified as one of the following:

"auto": If the ResetInputNormalization training option is 0 (false) and you specify any of the normalization statistics (Mean, StandardDeviation, Min, or Max), then normalize over the dimensions matching the statistics. Otherwise, recalculate the statistics at training time and apply channel-wise normalization.

"channel": Channel-wise normalization.

"element": Element-wise normalization.

"all": Normalize all values using scalar statistics.

The SequenceInputLayer object stores the NormalizationDimension property as a character vector.

Mean

Mean for zero-center and z-score normalization, specified as a numeric array, or empty.

For vector sequence input, Mean must be an InputSize-by-1 vector of means per channel, a numeric scalar, or [].

For 2-D image sequence input, Mean must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of means per channel, a numeric scalar, or [].

For 3-D image sequence input, Mean must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of means per channel, a numeric scalar, or [].

To specify the Mean property, the Normalization property must be "zerocenter" or "zscore". If Mean is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the mean using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to 0.

Mean can be complex-valued. (since R2024a) If Mean is complex-valued, then the SplitComplexInputs option must be 0 (false).

Before R2024a: Split the mean into real and imaginary parts, and split the input data into real and imaginary parts by setting the SplitComplexInputs option to 1 (true).

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Complex Number Support: Yes
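The accepted shapes for Mean can be illustrated with a short sketch. The numeric values are arbitrary placeholders; only the array shapes follow the description above.

```matlab
% Vector sequences with 3 channels: InputSize-by-1 vector of per-channel means.
layerVector = sequenceInputLayer(3, ...
    Normalization="zerocenter", ...
    Mean=[0.5; 1; 2]);

% 2-D image sequences: 1-by-1-by-InputSize(3) array of per-channel means.
channelMeans = reshape([0.485 0.456 0.406],1,1,3);
layerImage = sequenceInputLayer([224 224 3], ...
    Normalization="zerocenter", ...
    Mean=channelMeans);

% A numeric scalar uses the same mean for every element.
layerScalar = sequenceInputLayer(3,Normalization="zerocenter",Mean=1);

size(layerImage.Mean)
```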

StandardDeviation

Standard deviation used for z-score normalization, specified as a numeric array, a numeric scalar, or empty.

For vector sequence input, StandardDeviation must be an InputSize-by-1 vector of standard deviations per channel, a numeric scalar, or [].

For 2-D image sequence input, StandardDeviation must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of standard deviations per channel, a numeric scalar, or [].

For 3-D image sequence input, StandardDeviation must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of standard deviations per channel, or a numeric scalar.

To specify the StandardDeviation property, the Normalization property must be "zscore". If StandardDeviation is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the standard deviation using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to 1.

StandardDeviation can be complex-valued. (since R2024a) If StandardDeviation is complex-valued, then the SplitComplexInputs option must be 0 (false).

Before R2024a: Split the standard deviation into real and imaginary parts, and split the input data into real and imaginary parts by setting the SplitComplexInputs option to 1 (true).

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Complex Number Support: Yes

Min

Minimum value for rescaling, specified as a numeric array, or empty.

For vector sequence input, Min must be an InputSize-by-1 vector of minima per channel or a numeric scalar.

For 2-D image sequence input, Min must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of minima per channel, or a numeric scalar.

For 3-D image sequence input, Min must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of minima per channel, or a numeric scalar.

To specify the Min property, the Normalization property must be "rescale-symmetric" or "rescale-zero-one". If Min is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the minimum value using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to -1 when Normalization is "rescale-symmetric" and to 0 when Normalization is "rescale-zero-one".

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Max

Maximum value for rescaling, specified as a numeric array, or empty.

For vector sequence input, Max must be an InputSize-by-1 vector of maxima per channel or a numeric scalar.

For 2-D image sequence input, Max must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of maxima per channel, a numeric scalar, or [].

For 3-D image sequence input, Max must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of maxima per channel, a numeric scalar, or [].

To specify the Max property, the Normalization property must be "rescale-symmetric" or "rescale-zero-one". If Max is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the maximum value using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to 1.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

SplitComplexInputs

Flag to split input data into real and imaginary components, specified as one of these values:

0 (false): Do not split the input data.

1 (true): Split the input data into real and imaginary components.

When SplitComplexInputs is 1, the layer outputs twice as many channels as the input data has. For example, if the input data is complex-valued with numChannels channels, then the layer outputs data with 2*numChannels channels, where channels 1 through numChannels contain the real components of the input data and channels numChannels+1 through 2*numChannels contain the imaginary components of the input data. If the input data is real, then channels numChannels+1 through 2*numChannels are all zero.

If the input data is complex-valued and SplitComplexInputs is 0 (false), then the layer passes the complex-valued data to the next layers. (since R2024a)

Before R2024a: To input complex-valued data into a neural network, the SplitComplexInputs option of the input layer must be 1 (true).

For an example showing how to train a network with complex-valued data, see Train Network with Complex-Valued Data.
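The channel ordering described above can be imitated in plain MATLAB. This sketch only mirrors the documented layout with a manual concatenation; it is not the layer's internal implementation, and the numChannels-by-numTimeSteps array is an illustrative choice.

```matlab
numChannels = 2;

% Complex-valued example data: numChannels rows, three time steps.
X = [1+2i 3+4i  5+6i
     7+8i 9+10i 11+12i];

% Real parts fill channels 1 through numChannels, imaginary parts fill
% channels numChannels+1 through 2*numChannels.
Y = [real(X); imag(X)];

size(Y,1)       % 2*numChannels channels

% Input layer configured to split complex inputs in this way.
layer = sequenceInputLayer(numChannels,SplitComplexInputs=true);
```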

Properties

Sequence Input

InputSize

Size of the input, specified as a positive integer or a vector of positive integers.

For vector sequence input, InputSize is a scalar corresponding to the number of features.

For 1-D image sequence input, InputSize is a vector of two elements [h c], where h is the image height and c is the number of channels of the image.

For 2-D image sequence input, InputSize is a vector of three elements [h w c], where h is the image height, w is the image width, and c is the number of channels of the image.

For 3-D image sequence input, InputSize is a vector of four elements [h w d c], where h is the image height, w is the image width, d is the image depth, and c is the number of channels of the image.

To specify the minimum sequence length of the input data, use the MinLength property.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

MinLength

Minimum sequence length of the input data, specified as a positive integer. When training or making predictions with the network, if the input data has fewer than MinLength time steps, then the software throws an error.

When you create a network that downsamples data in the time dimension, you must make sure that the network supports your training data and any data for prediction. Some deep learning layers require that the input has a minimum sequence length. For example, a 1-D convolution layer requires that the input has at least as many time steps as the filter size.

As time series of sequence data propagate through a network, the sequence length can change. For example, downsampling operations such as 1-D convolutions can output data with fewer time steps than their input. This means that downsampling operations can cause later layers in the network to throw an error because the data has a shorter sequence length than the minimum length required by the layer.

When you train or assemble a network, the software automatically checks that sequences of length 1 can propagate through the network. Some networks might not support sequences of length 1, but can successfully propagate longer sequences. To check that a network supports propagating your training and expected prediction data, set the MinLength property to a value less than or equal to the minimum length of your data and the expected minimum length of your prediction data.

Tip

To prevent convolution and pooling layers from changing the size of the data, set the Padding option of the layer to "same" or "causal".

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Normalization

Data normalization to apply every time data is forward propagated through the input layer, specified as one of the following:

"zerocenter": Subtract the mean specified by Mean.

"zscore": Subtract the mean specified by Mean and divide by StandardDeviation.

"rescale-symmetric": Rescale the input to be in the range [-1, 1] using the minimum and maximum values specified by Min and Max, respectively.

"rescale-zero-one": Rescale the input to be in the range [0, 1] using the minimum and maximum values specified by Min and Max, respectively.

"none": Do not normalize the input data.

Function handle: Normalize the data using the specified function. The function must be of the form Y = f(X), where X is the input data and the output Y is the normalized data.

If the input data is complex-valued and the SplitComplexInputs option is 0 (false), then the Normalization option must be "zerocenter", "zscore", "none", or a function handle. (since R2024a)

Before R2024a: To input complex-valued data into the network, the SplitComplexInputs option must be 1 (true).

Tip

By default, the software automatically calculates the normalization statistics when you use the trainnet function. To save time when training, specify the required statistics for normalization and set the ResetInputNormalization option in trainingOptions to 0 (false).

The software applies normalization to all input elements, including padding values.

The SequenceInputLayer object stores this property as a character vector or a function handle.

Data Types: char | string | function_handle
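The tip about supplying statistics up front can be sketched as follows. The training data here is synthetic (random, unpadded sequences of numTimeSteps-by-numChannels), and computing per-channel statistics by stacking all time steps is one reasonable approach assumed for this example, not a procedure prescribed by the text.

```matlab
rng(0)                      % reproducible synthetic data
numChannels = 3;
XTrain = {rand(50,numChannels); rand(80,numChannels); rand(65,numChannels)};

% Stack all time steps and compute per-channel statistics.
allSteps = cat(1,XTrain{:});        % 195-by-numChannels
mu = mean(allSteps,1)';             % numChannels-by-1
sigma = std(allSteps,0,1)';         % numChannels-by-1

layer = sequenceInputLayer(numChannels, ...
    Normalization="zscore", ...
    Mean=mu, ...
    StandardDeviation=sigma);

% Keep the supplied statistics instead of recalculating them during training.
options = trainingOptions("adam",ResetInputNormalization=false);
```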

NormalizationDimension

Normalization dimension, specified as one of the following:

"auto": If the ResetInputNormalization training option is 0 (false) and you specify any of the normalization statistics (Mean, StandardDeviation, Min, or Max), then normalize over the dimensions matching the statistics. Otherwise, recalculate the statistics at training time and apply channel-wise normalization.

"channel": Channel-wise normalization.

"element": Element-wise normalization.

"all": Normalize all values using scalar statistics.

The SequenceInputLayer object stores this property as a character vector.

Mean

Mean for zero-center and z-score normalization, specified as a numeric array, or empty.

For vector sequence input, Mean must be an InputSize-by-1 vector of means per channel, a numeric scalar, or [].

For 2-D image sequence input, Mean must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of means per channel, a numeric scalar, or [].

For 3-D image sequence input, Mean must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of means per channel, a numeric scalar, or [].

To specify the Mean property, the Normalization property must be "zerocenter" or "zscore". If Mean is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the mean using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to 0.

Mean can be complex-valued. (since R2024a) If Mean is complex-valued, then the SplitComplexInputs option must be 0 (false).

Before R2024a: Split the mean into real and imaginary parts, and split the input data into real and imaginary parts by setting the SplitComplexInputs option to 1 (true).

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Complex Number Support: Yes

StandardDeviation

Standard deviation used for z-score normalization, specified as a numeric array, a numeric scalar, or empty.

For vector sequence input, StandardDeviation must be an InputSize-by-1 vector of standard deviations per channel, a numeric scalar, or [].

For 2-D image sequence input, StandardDeviation must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of standard deviations per channel, a numeric scalar, or [].

For 3-D image sequence input, StandardDeviation must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of standard deviations per channel, or a numeric scalar.

To specify the StandardDeviation property, the Normalization property must be "zscore". If StandardDeviation is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the standard deviation using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to 1.

StandardDeviation can be complex-valued. (since R2024a) If StandardDeviation is complex-valued, then the SplitComplexInputs option must be 0 (false).

Before R2024a: Split the standard deviation into real and imaginary parts, and split the input data into real and imaginary parts by setting the SplitComplexInputs option to 1 (true).

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Complex Number Support: Yes

Min

Minimum value for rescaling, specified as a numeric array, or empty.

For vector sequence input, Min must be an InputSize-by-1 vector of minima per channel or a numeric scalar.

For 2-D image sequence input, Min must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of minima per channel, or a numeric scalar.

For 3-D image sequence input, Min must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of minima per channel, or a numeric scalar.

To specify the Min property, the Normalization property must be "rescale-symmetric" or "rescale-zero-one". If Min is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the minimum value using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to -1 when Normalization is "rescale-symmetric" and to 0 when Normalization is "rescale-zero-one".

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Max

Maximum value for rescaling, specified as a numeric array, or empty.

For vector sequence input, Max must be an InputSize-by-1 vector of maxima per channel or a numeric scalar.

For 2-D image sequence input, Max must be a numeric array of the same size as InputSize, a 1-by-1-by-InputSize(3) array of maxima per channel, a numeric scalar, or [].

For 3-D image sequence input, Max must be a numeric array of the same size as InputSize, a 1-by-1-by-1-by-InputSize(4) array of maxima per channel, a numeric scalar, or [].

To specify the Max property, the Normalization property must be "rescale-symmetric" or "rescale-zero-one". If Max is [], then the software automatically sets the property at training or initialization time:

The trainnet function calculates the maximum value using the training data, ignoring any padding values, and uses the resulting value.

The initialize function and the dlnetwork function when the Initialize option is 1 (true) set the property to 1.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

SplitComplexInputs

This property is read-only.

Flag to split input data into real and imaginary components, specified as one of these values:

0 (false): Do not split the input data.

1 (true): Split the input data into real and imaginary components.

When SplitComplexInputs is 1, the layer outputs twice as many channels as the input data has. For example, if the input data is complex-valued with numChannels channels, then the layer outputs data with 2*numChannels channels, where channels 1 through numChannels contain the real components of the input data and channels numChannels+1 through 2*numChannels contain the imaginary components of the input data. If the input data is real, then channels numChannels+1 through 2*numChannels are all zero.

If the input data is complex-valued and SplitComplexInputs is 0 (false), then the layer passes the complex-valued data to the next layers. (since R2024a)

Before R2024a: To input complex-valued data into a neural network, the SplitComplexInputs option of the input layer must be 1 (true).

For an example showing how to train a network with complex-valued data, see Train Network with Complex-Valued Data.

Layer

Name

Layer name, specified as a character vector or a string scalar. For Layer array input, the trainnet and dlnetwork functions automatically assign names to unnamed layers.

The SequenceInputLayer object stores this property as a character vector.

Data Types: char | string

NumInputs

This property is read-only.

Number of inputs to the layer. The layer has no inputs.

Data Types: double

InputNames

This property is read-only.

Input names of the layer. The layer has no inputs.

Data Types: cell

NumOutputs

This property is read-only.

Number of outputs from the layer, stored as 1. This layer has a single output only.

Data Types: double

OutputNames

This property is read-only.

Output names, stored as {'out'}. This layer has a single output only.

Data Types: cell
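The layer properties above can be inspected directly on a layer object. This is a small sketch; the name "input" is an arbitrary choice for the example.

```matlab
layer = sequenceInputLayer(12,Name="input");

layerName = layer.Name            % stored as a character vector
numInputs = layer.NumInputs       % an input layer has no inputs
numOutputs = layer.NumOutputs     % a single output
outputNames = layer.OutputNames   % name of the single output
```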

Examples

Create a sequence input layer with an input size of 12.

layer = sequenceInputLayer(12)

layer =

SequenceInputLayer with properties:

Name: ''

InputSize: 12

MinLength: 1

SplitComplexInputs: 0

Hyperparameters

Normalization: 'none'

NormalizationDimension: 'auto'

Include a sequence input layer in a Layer array.

inputSize = 12;
numHiddenUnits = 100;
numClasses = 9;

layers = [ ...
    sequenceInputLayer(inputSize)
    lstmLayer(numHiddenUnits,OutputMode="last")
    fullyConnectedLayer(numClasses)
    softmaxLayer]

layers =

4×1 Layer array with layers:

1 '' Sequence Input Sequence input with 12 dimensions

2 '' LSTM LSTM with 100 hidden units

3 '' Fully Connected 9 fully connected layer

4 '' Softmax softmax

Create a sequence input layer for sequences of 224-by-224 RGB images with the name 'seq1'.

layer = sequenceInputLayer([224 224 3], 'Name', 'seq1')

layer =

SequenceInputLayer with properties:

Name: 'seq1'

InputSize: [224 224 3]

MinLength: 1

SplitComplexInputs: 0

Hyperparameters

Normalization: 'none'

NormalizationDimension: 'auto'

Train a deep learning LSTM network for sequence-to-label classification.

Load the example data from WaveformData.mat. The data is a numObservations-by-1 cell array of sequences, where numObservations is the number of sequences. Each sequence is a numTimeSteps-by-numChannels numeric array, where numTimeSteps is the number of time steps of the sequence and numChannels is the number of channels of the sequence.

load WaveformData

Visualize some of the sequences in a plot.

numChannels = size(data{1},2);

idx = [3 4 5 12];

figure

tiledlayout(2,2)

for i = 1:4

nexttile

stackedplot(data{idx(i)},DisplayLabels="Channel "+string(1:numChannels))

xlabel("Time Step")

title("Class: " + string(labels(idx(i))))

end

View the class names.

classNames = categories(labels)

classNames = 4×1 cell

{'Sawtooth'}

{'Sine' }

{'Square' }

{'Triangle'}

Set aside data for testing. Partition the data into a training set containing 90% of the data and a test set containing the remaining 10%. To partition the data, use the trainingPartitions function, which is attached to this example as a supporting file. To access this file, open the example as a live script.

numObservations = numel(data);
[idxTrain,idxTest] = trainingPartitions(numObservations,[0.9 0.1]);

XTrain = data(idxTrain);
TTrain = labels(idxTrain);

XTest = data(idxTest);
TTest = labels(idxTest);

Define the LSTM network architecture. Specify the input size as the number of channels of the input data. Specify an LSTM layer with 120 hidden units that outputs the last element of the sequence. Finally, include a fully connected layer with an output size that matches the number of classes, followed by a softmax layer.

numHiddenUnits = 120;
numClasses = numel(categories(TTrain));

layers = [ ...
    sequenceInputLayer(numChannels)
    lstmLayer(numHiddenUnits,OutputMode="last")
    fullyConnectedLayer(numClasses)
    softmaxLayer]

layers =

4×1 Layer array with layers:

1 '' Sequence Input Sequence input with 3 dimensions

2 '' LSTM LSTM with 120 hidden units

3 '' Fully Connected 4 fully connected layer

4 '' Softmax softmax

Specify the training options. Train using the Adam solver with a learning rate of 0.01 and a gradient threshold of 1. Set the maximum number of epochs to 200 and shuffle the data every epoch. Monitor the accuracy metric, display the training progress in a plot, and disable the verbose output. By default, the software trains on a GPU if one is available. Using a GPU requires Parallel Computing Toolbox and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox).

options = trainingOptions("adam", ...
    MaxEpochs=200, ...
    InitialLearnRate=0.01, ...
    Shuffle="every-epoch", ...
    GradientThreshold=1, ...
    Verbose=false, ...
    Metrics="accuracy", ...
    Plots="training-progress");

Train the LSTM network using the trainnet function. For classification, use cross-entropy loss.

net = trainnet(XTrain,TTrain,layers,"crossentropy",options);

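Classify the test data. Use the same mini-batch size as for training. This example relies on the default mini-batch size in both trainingOptions and minibatchpredict, so the call does not set it explicitly. Convert the prediction scores to labels using the scores2label function.

scores = minibatchpredict(net,XTest);
YTest = scores2label(scores,classNames);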

Calculate the classification accuracy of the predictions.

acc = mean(YTest == TTest)

acc = 0.8700

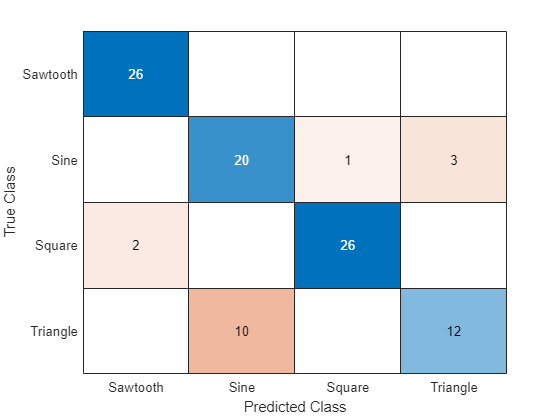

Display the classification results in a confusion chart.

figure
confusionchart(TTest,YTest)

Para crear una red de LSTM para la clasificación secuencia a etiqueta, cree un arreglo de capas que contenga una capa de entrada de secuencias, una capa de LSTM, una capa totalmente conectada y una capa softmax.

Establezca el tamaño de la capa de entrada de secuencias en el número de características de los datos de entrada. Establezca el tamaño de la capa totalmente conectada en el número de clases. No es necesario especificar la longitud de la secuencia.

Para la capa de LSTM, especifique el número de unidades ocultas y el modo de salida "last".

numFeatures = 12; numHiddenUnits = 100; numClasses = 9; layers = [ ... sequenceInputLayer(numFeatures) lstmLayer(numHiddenUnits,OutputMode="last") fullyConnectedLayer(numClasses) softmaxLayer];

Para ver un ejemplo de cómo entrenar una red de LSTM para una clasificación secuencia a etiqueta y clasificar nuevos datos, consulte Clasificación de secuencias mediante deep learning.

To create an LSTM network for sequence-to-sequence classification, use the same architecture as for sequence-to-label classification, but set the output mode of the LSTM layer to "sequence".

    numFeatures = 12;
    numHiddenUnits = 100;
    numClasses = 9;
    layers = [ ...
        sequenceInputLayer(numFeatures)
        lstmLayer(numHiddenUnits,OutputMode="sequence")
        fullyConnectedLayer(numClasses)
        softmaxLayer];
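To make the difference between the two output modes concrete, the following sketch passes the same random input through an untrained network of each kind and inspects the output format and size. The batch size, the sequence length, the random data, and the variable names are assumptions chosen for illustration. The networks are only initialized, not trained, so the scores themselves are meaningless.

```matlab
% Assumed sizes, for illustration only.
numFeatures = 12;
numHiddenUnits = 100;
numClasses = 9;
miniBatchSize = 4;     % hypothetical number of observations
sequenceLength = 20;   % hypothetical number of time steps

layersLast = [
    sequenceInputLayer(numFeatures)
    lstmLayer(numHiddenUnits,OutputMode="last")
    fullyConnectedLayer(numClasses)
    softmaxLayer];

layersSeq = [
    sequenceInputLayer(numFeatures)
    lstmLayer(numHiddenUnits,OutputMode="sequence")
    fullyConnectedLayer(numClasses)
    softmaxLayer];

% Create initialized (untrained) networks from the layer arrays.
netLast = dlnetwork(layersLast);
netSeq = dlnetwork(layersSeq);

% Random input in "CBT" (channel, batch, time) format.
X = dlarray(rand(numFeatures,miniBatchSize,sequenceLength,"single"),"CBT");

YLast = predict(netLast,X);
YSeq = predict(netSeq,X);

% Show the format and size of each output.
disp(dims(YLast)), disp(size(YLast))
disp(dims(YSeq)), disp(size(YSeq))
```

With these assumed sizes, the "last" network returns a 9-by-4 array in "CB" format (one score vector per observation), while the "sequence" network returns a 9-by-4-by-20 array in "CBT" format (one score vector per observation and time step).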

To create an LSTM network for sequence-to-one regression, create a layer array containing a sequence input layer, an LSTM layer, and a fully connected layer.

Set the size of the sequence input layer to the number of features of the input data. Set the size of the fully connected layer to the number of responses. You do not need to specify the sequence length.

For the LSTM layer, specify the number of hidden units and the output mode "last".

    numFeatures = 12;
    numHiddenUnits = 125;
    numResponses = 1;
    layers = [ ...
        sequenceInputLayer(numFeatures)
        lstmLayer(numHiddenUnits,OutputMode="last")
        fullyConnectedLayer(numResponses)];

To create an LSTM network for sequence-to-sequence regression, use the same architecture as for sequence-to-one regression, but set the output mode of the LSTM layer to "sequence".

    numFeatures = 12;
    numHiddenUnits = 125;
    numResponses = 1;
    layers = [ ...
        sequenceInputLayer(numFeatures)
        lstmLayer(numHiddenUnits,OutputMode="sequence")
        fullyConnectedLayer(numResponses)];

For an example showing how to train an LSTM network for sequence-to-sequence regression and predict on new data, see Sequence-to-Sequence Regression Using Deep Learning.

You can make LSTM networks deeper by inserting extra LSTM layers with the output mode "sequence" before the last LSTM layer. To prevent overfitting, you can insert dropout layers after the LSTM layers.

For sequence-to-label classification networks, the output mode of the last LSTM layer must be "last".

    numFeatures = 12;
    numHiddenUnits1 = 125;
    numHiddenUnits2 = 100;
    numClasses = 9;
    layers = [ ...
        sequenceInputLayer(numFeatures)
        lstmLayer(numHiddenUnits1,OutputMode="sequence")
        dropoutLayer(0.2)
        lstmLayer(numHiddenUnits2,OutputMode="last")
        dropoutLayer(0.2)
        fullyConnectedLayer(numClasses)
        softmaxLayer];

For sequence-to-sequence classification networks, the output mode of the last LSTM layer must be "sequence".

    numFeatures = 12;
    numHiddenUnits1 = 125;
    numHiddenUnits2 = 100;
    numClasses = 9;
    layers = [ ...
        sequenceInputLayer(numFeatures)
        lstmLayer(numHiddenUnits1,OutputMode="sequence")
        dropoutLayer(0.2)
        lstmLayer(numHiddenUnits2,OutputMode="sequence")
        dropoutLayer(0.2)
        fullyConnectedLayer(numClasses)
        softmaxLayer];

Algorithms

Layers in a layer array or layer graph pass data to subsequent layers as formatted dlarray objects. The format of a dlarray object is a string of characters in which each character describes the corresponding dimension of the data. The format consists of one or more of these characters:

- "S": spatial
- "C": channel
- "B": batch
- "T": time
- "U": unspecified

For example, you can represent vector sequence data as a 3-D array in which the first dimension corresponds to the channel dimension, the second dimension corresponds to the batch dimension, and the third dimension corresponds to the time dimension. This representation is in the format "CBT" (channel, batch, time).
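The "CBT" representation described above can be sketched with a formatted dlarray. The sizes (12 channels, 8 observations, 50 time steps), the random data, and the variable names are assumptions chosen for illustration.

```matlab
% Assumed sizes for illustration: 12 channels, 8 observations, 50 time steps.
numChannels = 12;
numObservations = 8;
numTimeSteps = 50;

% Create a formatted dlarray with format "CBT" (channel, batch, time).
X = dlarray(rand(numChannels,numObservations,numTimeSteps),"CBT");

fmt = dims(X);                    % format of the dlarray
tDim = finddim(X,"T");            % index of the time dimension
cSize = size(X,finddim(X,"C"));   % size of the channel dimension

disp(fmt)
```

Querying dimensions by label with `finddim` instead of by position keeps code independent of the order in which the dimensions happen to be stored.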

The input layer of a network specifies the layout of the data that the network expects. If you have data in a different layout, then specify the layout using the InputDataFormats training option.
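As a minimal sketch of the two ways to handle data in a different layout, suppose a sequence is stored channels first, as a c-by-t matrix. The sizes, the random data, and the variable names below are assumptions for illustration. The format string "CTB" (channel, time, batch) is the value assumed to describe c-by-t sequences.

```matlab
% Hypothetical data: one sequence stored as a c-by-t matrix
% (12 features, 30 time steps), that is, channels first.
numFeatures = 12;
numTimeSteps = 30;
XChannelsFirst = rand(numFeatures,numTimeSteps);

% Option 1: describe the layout through the training options.
% "CTB" = channel, time, batch.
options = trainingOptions("adam",InputDataFormats="CTB");

% Option 2: rearrange the data into the t-by-c layout instead.
XTimeFirst = XChannelsFirst.';
disp(size(XTimeFirst))
```

Describing the layout avoids copying the data, while rearranging it keeps the training options at their defaults. Which one is preferable depends on how the rest of the workflow stores its sequences.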

This table describes the expected layout of data for a neural network with a sequence input layer.

| Data | Layout |
|---|---|
| Vector sequences | t-by-c matrices, where t is the sequence length and c is the number of features of the sequences. |
| 1-D image sequences | h-by-c-by-t arrays, where h and c correspond to the height and number of channels of the images, respectively, and t is the sequence length. |
| 2-D image sequences | h-by-w-by-c-by-t arrays, where h, w, and c correspond to the height, width, and number of channels of the images, respectively, and t is the sequence length. |
| 3-D image sequences | h-by-w-by-d-by-c-by-t arrays, where h, w, d, and c correspond to the height, width, depth, and number of channels of the 3-D images, respectively, and t is the sequence length. |

For complex-valued input to the neural network, when the SplitComplexInputs option is 0 (false), the layer passes complex-valued data to subsequent layers. (since R2024a)

Before R2024a: To input complex-valued data into a neural network, the SplitComplexInputs option of the input layer must be 1 (true).

If the input data is complex-valued and the SplitComplexInputs option is 0 (false), then the Normalization option must be "zerocenter", "zscore", "none", or a function handle. The Mean and StandardDeviation properties of the layer also support complex-valued data for the "zerocenter" and "zscore" normalization options.
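The two ways of handling complex-valued input can be sketched as follows. The feature count and the variable names are assumptions for illustration.

```matlab
numFeatures = 12;  % assumed number of features

% Pass complex-valued data through to subsequent layers
% (SplitComplexInputs=false), using a normalization option that
% is allowed for complex-valued input.
layerComplex = sequenceInputLayer(numFeatures, ...
    SplitComplexInputs=false, ...
    Normalization="none");

% Alternatively, have the layer split the input into real and
% imaginary components.
layerSplit = sequenceInputLayer(numFeatures,SplitComplexInputs=true);

disp(layerComplex.SplitComplexInputs)
disp(layerSplit.SplitComplexInputs)
```

Splitting is the option that also works in releases before R2024a. Passing complex values through requires that the subsequent layers can operate on complex-valued data.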

For an example showing how to train a network with complex-valued data, see Train Network with Complex-Valued Data.

Extended Capabilities

- Code generation does not support passing dlarray objects with unspecified (U) dimensions to this layer.
- For vector sequence inputs, the number of features must be a constant during code generation.
- You can generate C or C++ code that does not depend on third-party deep learning libraries for input data with zero, one, two, or three spatial dimensions.
- For ARM® Compute and Intel® MKL-DNN, the input data must contain either zero or two spatial dimensions.
- Code generation does not support 'Normalization' specified using a function handle.
- Code generation does not support complex inputs or the 'SplitComplexInputs' option.

Usage notes and limitations:

- Code generation does not support passing dlarray objects with unspecified (U) dimensions to this layer.
- To generate CUDA® or C++ code by using GPU Coder™, you must first construct and train a deep neural network. Once the network is trained and evaluated, you can configure the code generator to generate code and deploy the convolutional neural network on platforms that use NVIDIA® or ARM GPU processors. For more information, see Deep Learning with GPU Coder (GPU Coder).
- You can generate CUDA code that is independent of deep learning libraries for input data with zero, one, two, or three spatial dimensions.
- You can generate code that takes advantage of the NVIDIA CUDA deep neural network library (cuDNN) or the NVIDIA TensorRT™ high performance inference library.
  - The cuDNN library supports vector and 2-D image sequences. The TensorRT library supports only vector input sequences.
- All spatial and channel dimensions of the input must be constant during code generation. For example:
  - For vector sequence inputs, the number of features must be a constant during code generation.
  - For image sequence inputs, the height, width, and number of channels must be constant during code generation.
- Code generation does not support 'Normalization' specified using a function handle.
- Code generation does not support complex inputs or the 'SplitComplexInputs' option.

Version History

Introduced in R2017b

For complex-valued input to the neural network, when the SplitComplexInputs option is 0 (false), the layer passes complex-valued data to subsequent layers.

If the input data is complex-valued and the SplitComplexInputs option is 0 (false), then the Normalization option must be "zerocenter", "zscore", "none", or a function handle. The Mean and StandardDeviation properties of the layer also support complex-valued data for the "zerocenter" and "zscore" normalization options.

Starting in R2024a, DAGNetwork and SeriesNetwork objects are not recommended. Use dlnetwork objects instead.

There are no plans to remove support for DAGNetwork and SeriesNetwork objects. However, dlnetwork objects are recommended instead because they have these advantages:

- dlnetwork objects are a unified data type that supports network building, prediction, built-in training, visualization, compression, verification, and custom training loops.
- dlnetwork objects support a wider range of network architectures that you can create or import from external platforms.
- The trainnet function supports dlnetwork objects, which lets you easily specify loss functions. You can select from built-in loss functions or specify a custom loss function.
- Training and prediction with dlnetwork objects is typically faster than LayerGraph and trainNetwork workflows.

To convert a trained DAGNetwork or SeriesNetwork object to a dlnetwork object, use the dag2dlnetwork function.

Sequence input layers in a dlnetwork object expect data in a different layout than sequence input layers in DAGNetwork or SeriesNetwork objects. For vector sequence inputs, DAGNetwork and SeriesNetwork object functions expect c-by-t matrices, where c is the number of features of the sequences and t is the sequence length. For vector sequence inputs, dlnetwork object functions expect t-by-c matrices, where t is the sequence length and c is the number of features of the sequences.
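When moving existing data from the c-by-t layout to the t-by-c layout, one option is to transpose every sequence. The following sketch does this for a cell array of vector sequences. The data set, its sizes, and the variable names are assumptions for illustration.

```matlab
% Hypothetical data set: three sequences stored as c-by-t matrices
% (12 features, varying sequence lengths).
numFeatures = 12;
XOld = {rand(numFeatures,20); rand(numFeatures,35); rand(numFeatures,28)};

% Transpose every sequence to the t-by-c layout.
XNew = cellfun(@(x) x.',XOld,UniformOutput=false);

% Inspect the size of the second sequence before and after.
disp(size(XOld{2}))
disp(size(XNew{2}))
```

The nonconjugate transpose operator `.'` is used so that the same code would also leave complex-valued sequences unchanged apart from the layout.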

Starting in R2020a, trainNetwork ignores padding values when calculating normalization statistics. This means that the Normalization option in the sequenceInputLayer now makes training invariant to data operations. For example, 'zerocenter' normalization now implies that the training results are invariant to the mean of the data.

If you train on padded sequences, then the calculated normalization factors may be different in earlier versions and can produce different results.
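The effect of padding on a normalization statistic can be illustrated with plain arithmetic. This sketch is not the toolbox implementation. The two sequences, the zero padding value, and the variable names are assumptions chosen to show why including padding changes the mean.

```matlab
% Illustration only: this is not the toolbox implementation.
% Two hypothetical single-feature sequences of different lengths.
x1 = [2 4 6 8];
x2 = [10 20];

% Right-pad the shorter sequence with zeros to a common length.
maxLen = max(numel(x1),numel(x2));
x2Padded = [x2 zeros(1,maxLen - numel(x2))];

% Mean over all values, including the padding.
meanWithPadding = mean([x1 x2Padded]);

% Mean over the true (unpadded) values only.
meanWithoutPadding = mean([x1 x2]);

disp([meanWithPadding meanWithoutPadding])
```

For this assumed data, the mean including padding is 6.25, while the mean of the true values is 50/6 (about 8.33). A zero-center normalization based on the first value would therefore shift the data by a different amount than one based on the second.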

Starting in R2019b, sequenceInputLayer, by default, uses channel-wise normalization for zero-center normalization. In earlier versions, this layer uses element-wise normalization. To reproduce the earlier behavior, set the NormalizationDimension option of this layer to 'element'.
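A minimal sketch of selecting the normalization dimension explicitly is shown below. The feature count and the variable names are assumptions for illustration.

```matlab
numFeatures = 12;  % assumed number of features

% Channel-wise zero-center normalization, set explicitly.
layerChannel = sequenceInputLayer(numFeatures, ...
    Normalization="zerocenter", ...
    NormalizationDimension="channel");

% Element-wise zero-center normalization, as in earlier versions.
layerElement = sequenceInputLayer(numFeatures, ...
    Normalization="zerocenter", ...
    NormalizationDimension="element");

disp(layerChannel.NormalizationDimension)
disp(layerElement.NormalizationDimension)
```

Setting the option explicitly, rather than relying on the default, makes the intended behavior visible in code that has to give the same results across releases.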

See Also

trainnet | trainingOptions | dlnetwork | minibatchpredict | predict | scores2label | lstmLayer | bilstmLayer | gruLayer | sequenceFoldingLayer | flattenLayer | featureInputLayer | Deep Network Designer | exportNetworkToSimulink | Rescale-Symmetric 1D | Rescale-Zero-One 1D | Zerocenter 1D | Zscore 1D

Topics

- Sequence Classification Using Deep Learning
- Time Series Forecasting Using Deep Learning
- Sequence-to-Sequence Classification Using Deep Learning
- Classify Videos Using Deep Learning
- Visualize Activations of LSTM Network
- Long Short-Term Memory Neural Networks
- Deep Learning in MATLAB
- List of Deep Learning Layers
